How Advanced Big Data Integration and Services Transform Fragmented Enterprise Data into Unified Intelligence for AI & Strategic ROI

The Dawn of Unified Intelligence

Gone are the days when data was viewed simply as a byproduct of operations — in 2026’s enterprise landscape, it is the central currency of competitive prowess. As organizations hold more data than ever, the challenge of extracting value from that data is an ongoing conundrum for the C-suite. The problem is rarely a lack of data per se, but rather an inability to synthesize it. Siloed systems, outdated infrastructure, and disconnected teams generate blind spots that impede innovation and dim big-picture clarity. This is the point at which mastery of big data integration distinguishes market leaders from laggards.

McKinsey & Company developed a model called The Promise of Open Financial Data, which suggested that remediating bad customer-relationship-management data due to multi-silo fragmentation can cost as much as 20% of an average financial institution's revenue (source). The lesson is simple: be prepared, because leading businesses no longer manage isolated datasets; they engineer integrated data ecosystems.

Moving From Broken Data to Cohesive Intelligence

Historically, departments worked in their own technological bubbles. Marketing worked on specific CRMs, operations used isolated ERPs, and finance managed different ledgers. Now, this fragmentation is a deadly liability. A solid data integration strategy that tears down these silos is key to moving from fragmented data to unified intelligence. When the various strands of data are woven into a single, unified narrative, leadership receives a 360-degree view of the organization that empowers proactive rather than reactive decision-making.

Start with Digital Transformation and AI Integration

Without a data foundation, digital transformation and artificial intelligence are empty buzzwords. AI algorithms rely on large volumes of diverse, carefully curated data to make accurate predictions. An AI-ready data infrastructure is underpinned by automated data pipelines that feed clean, consolidated data into analytical models. Without that integration layer, machine learning projects stall or generate biased and incorrect insights, with negative financial and strategic implications.

What Will Enterprise Leaders Take Away from This Guide

Created by experts at DATAFOREST, this in-depth guide is a valuable resource for enterprise architects, IT consultants, and C-level executives. We will break down what big data integration is and how it impacts business, examine essential architectural patterns, and show how top companies realize technical integrations with real board-level ROI.

Contact DATAFOREST and get a consultation for your data architecture needs.

What Is Big Data Integration?

Understanding data consolidation and aggregation at scale is key to unlocking the full value of enterprise assets.

Definition and Core Principles

Simply put, big data integration is the systematic practice of bringing together large-scale, complex datasets from disparate sources into a single, comprehensive view. It covers data ingestion and transformation, ensuring that data (structured, semi-structured, or unstructured) is cleaned, transformed, and delivered to a repository layer such as a data warehouse or data lake. Its core principles center on volume, velocity, and variety, and they call for automated frameworks that preserve data fidelity while operating at enterprise scale.

Types of Data That Need to Be Integrated

A modern integrated data ecosystem needs to combine multiple data typologies, including:

- Structured Data: Standardized, organized data, particularly from relational databases, financials, and legacy ERPs.

- Semi-Structured Data: Logs, XML & JSON files from web apps, sensors, and API system integration.

- Unstructured Data: Text documents, social media streams, audio, and video files.

- Streaming Data: Real-time, high-velocity data generated by sources such as IoT devices and financial trading platforms.
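To make the idea of combining data types concrete, here is a minimal sketch that normalizes one structured source (a CSV export) and one semi-structured source (nested JSON events) into a shared schema and loads them into a single table. The sample data, field names, and the use of an in-memory SQLite database as a stand-in for a warehouse are all assumptions made for illustration, not a prescribed design.

```python
import csv
import io
import json
import sqlite3

# Hypothetical sample inputs: a CSV export from an ERP and JSON events from a web app.
ERP_CSV = "customer_id,amount\n101,250.00\n102,99.50\n"
WEB_JSON = '[{"user": {"id": 101}, "event": "purchase", "value": "40.25"}]'


def from_csv(text):
    """Structured source: rows already have fixed columns."""
    for row in csv.DictReader(io.StringIO(text)):
        yield {
            "customer_id": int(row["customer_id"]),
            "amount": float(row["amount"]),
            "source": "erp",
        }


def from_json(text):
    """Semi-structured source: nested fields are flattened to the shared schema."""
    for event in json.loads(text):
        yield {
            "customer_id": int(event["user"]["id"]),
            "amount": float(event["value"]),
            "source": "web",
        }


def load(records):
    """Load normalized records into an in-memory SQLite table (warehouse stand-in)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE transactions (customer_id INTEGER, amount REAL, source TEXT)"
    )
    conn.executemany(
        "INSERT INTO transactions VALUES (:customer_id, :amount, :source)",
        list(records),
    )
    return conn


conn = load(list(from_csv(ERP_CSV)) + list(from_json(WEB_JSON)))

# One query now spans both sources.
totals = dict(
    conn.execute(
        "SELECT customer_id, SUM(amount) FROM transactions "
        "GROUP BY customer_id ORDER BY customer_id"
    ).fetchall()
)
print(totals)
```

The point of the sketch is the shared schema: once every source is mapped to the same fields, downstream queries no longer need to know where a record came from.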

Difference between Big Data Integration and Traditional Data Integration

Traditional enterprise data integration relies on scheduled batch processes (classic ETL) that physically move predictable, structured data into rigid relational databases. Big data integration, by contrast, uses dynamic, highly scalable architectures to process large volumes of data in varying formats. To achieve real-time data integration and continuous analytics, it combines batch and stream processing paradigms with distributed computing frameworks.

How Big Data Integration Has Become a Board-Level Priority

The discussion of enterprise data integration has left the IT server room and is solidly in the boardroom now.

The True Cost of Data Silos

Data silos are not just an IT headache; they’re a crippling financial drain. When departments are working off different sources of truth, organizations experience duplicate efforts, compliance issues, and missed revenue opportunities. According to McKinsey research, a well-architected data ecosystem reduces data spend by 30% and decreases new data product time-to-market by 40% (source). Comprehensive big data integration services act directly to mitigate each of these systemic inefficiencies.

Enabling Reliable Executive Decision-Making

Executives can't run a multinational enterprise on instinct or dusty, weekly reports. An enterprise data architecture that provides a consolidated data platform is indispensable for sound strategic decisions. When leadership can utilize real-time, verified, holistic dashboards, they can pivot supply chains, revise pricing models, and reallocate capital with complete assurance.

Integration as a Competitive Differentiator

In over-saturated markets, your data becomes your best competitive moat. Successfully implementing big data integration capabilities gives organizations a competitive edge by spotting micro-trends faster, customizing customer experiences, and enhancing operational efficiencies faster than the competition. We view the market-leading approach of making data a strategic asset as an essential part of our DATAFOREST philosophy, which we outline on the DATAFOREST About Us page.

Essential Infrastructural Challenges of Big Data Assimilation

While the advantages are clear, executing an integration strategy successfully is not straightforward. The first step you should take toward resolving these issues is to understand big data integration challenges.

Legacy Systems and Technical Debt

Most Fortune 500 companies are burdened by decades of technical debt. Legacy on-premise mainframes require complex middleware and enterprise resource planning (ERP) data integration methods to operate seamlessly with modern cloud platforms. Running modern data workloads through obsolete pipes often introduces severe latency and data loss.

Hybrid and Multi-Cloud Complexity

Few modern enterprises depend upon a single cloud provider. A hybrid data integration solution has to enable a seamless connection of assets within AWS, Azure, Google Cloud, and on-premise servers. This necessitates a highly resilient big data integration framework to manage access, latency, and egress costs that span these distributed environments.

Data Quality and Consistency Issues

When processing large amounts of data, even small mistakes get magnified. Downstream analytics can be corrupted by inconsistent formatting, missing fields, and duplicate records. During data ingestion and transformation, it is essential to enforce stringent data quality rules to ensure a reliable single source of truth.
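The three problems named above (inconsistent formatting, missing fields, and duplicate records) can each be expressed as a simple rule applied at ingestion time. The sketch below is one possible shape for such rules; the field names, the two accepted date formats, and the choice of `order_id` as the deduplication key are hypothetical.

```python
from datetime import datetime

REQUIRED_FIELDS = ("order_id", "email", "order_date")  # hypothetical schema
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")  # hypothetical accepted input formats


def normalize(record):
    """Apply consistent formatting: trimmed lowercase emails, ISO 8601 dates."""
    clean = dict(record)
    clean["email"] = clean["email"].strip().lower()
    for fmt in DATE_FORMATS:
        try:
            parsed = datetime.strptime(clean["order_date"], fmt)
        except ValueError:
            continue
        clean["order_date"] = parsed.date().isoformat()
        return clean
    raise ValueError(f"unrecognized date: {clean['order_date']!r}")


def apply_quality_rules(records):
    """Split records into accepted rows and rejected rows with a reason."""
    accepted, rejected, seen_ids = [], [], set()
    for record in records:
        missing = [f for f in REQUIRED_FIELDS if not record.get(f)]
        if missing:
            rejected.append((record, f"missing fields: {missing}"))
            continue
        try:
            clean = normalize(record)
        except ValueError as exc:
            rejected.append((record, str(exc)))
            continue
        if clean["order_id"] in seen_ids:
            rejected.append((record, "duplicate order_id"))
            continue
        seen_ids.add(clean["order_id"])
        accepted.append(clean)
    return accepted, rejected


raw = [
    {"order_id": "A1", "email": " Ana@Example.com ", "order_date": "03/02/2026"},
    {"order_id": "A1", "email": "ana@example.com", "order_date": "2026-02-03"},
    {"order_id": "A2", "email": "", "order_date": "2026-02-04"},
]
accepted, rejected = apply_quality_rules(raw)
for record, reason in rejected:
    print(record["order_id"], "->", reason)
```

Keeping rejected rows together with a reason, rather than silently dropping them, gives data owners something to act on at the source.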

Scalability and Performance Requirements

As the volume of data in an enterprise continues to grow exponentially, the infrastructure that handles it must also scale elastically. In systems architecture, scalable data integration means that spikes in data movement, such as surges during peak retail holidays, do not crash the pipelines or hold up key real-time reporting.

Governance, Security, and Compliance Requirements

As GDPR, CCPA, and new worldwide data frameworks proliferate, cross-border data integration becomes a significant compliance risk. At the enterprise level, data infrastructure must natively provide stringent access controls, enable visibility of data lineage, and offer encryption at rest and in transit.

Enterprise Data Integration Architectures: Imperatives and Options

Choosing the right architecture for big data integration determines your business's agility and scalability. Let’s talk about the main strategic options for enterprise IT consultants and their architects.

Centralized Architecture (Data Warehouse Model)

The conventional centralized paradigm consolidates structured data into one large enterprise data warehouse (EDW). This model works well with traditional BI toolsets for reporting and analyzing historical trends, but it becomes rigid and costly when processing large volumes of unstructured or streaming data.

Data Lake and Lakehouse Architectures

Because EDWs have limitations, organizations shift toward data lakes, which store raw data in its native format. The modern variant is the data lakehouse, which combines the (almost) limitless storage of a lake with the governance and structured querying of a warehouse. One way to strike this balance is to implement a Databricks architecture.

Distributed and Federated Architectures

Federated architectures provide a virtual data integration layer for organizations that cannot physically merge data for regulatory or geographical reasons. Rather than moving the data into a centralized database, queries are distributed to the local databases, and only the results are aggregated by a central query layer.
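The federated pattern can be illustrated with a small sketch in which each region keeps its own database and only locally computed aggregates leave it. Two in-memory SQLite databases stand in for the regional systems; the region names, table layout, and figures are hypothetical.

```python
import sqlite3


def make_regional_db(rows):
    """Create a stand-in regional database that never leaves its region."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE sales (product TEXT, revenue REAL)")
    conn.executemany("INSERT INTO sales VALUES (?, ?)", rows)
    return conn


# Hypothetical regional systems with their own local data.
REGIONS = {
    "eu": make_regional_db([("widget", 100.0), ("gadget", 50.0)]),
    "us": make_regional_db([("widget", 200.0)]),
}

LOCAL_QUERY = "SELECT product, SUM(revenue) FROM sales GROUP BY product"


def federated_revenue(regions):
    """Send the same query to every region and merge only the aggregates."""
    combined = {}
    for conn in regions.values():
        # Raw rows stay in the region; only per-product sums are returned.
        for product, revenue in conn.execute(LOCAL_QUERY):
            combined[product] = combined.get(product, 0.0) + revenue
    return combined


result = federated_revenue(REGIONS)
print(result)
```

Production federation engines also handle query planning, pushdown, and access control; the sketch shows only the data-movement principle.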

Hybrid Architectures for Legacy Modernization

The majority of businesses have a hybrid architecture, where legacy on-premise systems integrate with newer cloud environments. This method makes use of APIs, microservices, and cloud data integration solutions to connect the old with the new so organizations can take their time modernizing without breaking core operations.

Real-Time vs Batch Integration Architectures

The choice between batch processing and stream processing is determined by business requirements.

- Batch Processing: Best suited for high-volume, scheduled processes (e.g., nightly payroll extraction).

- Real-Time Data Integration: Critical for taking immediate action (e.g., fraud detection, dynamic pricing, or live supply chain tracking).

Modern data pipelines that must serve both needs often follow a Lambda architecture, which runs separate batch and streaming layers, or a Kappa architecture, which treats all data as a stream.
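The difference between the two modes comes down to when results become available. The following sketch computes the same per-store totals twice: once over the whole accumulated dataset, as a scheduled batch job would, and once incrementally per event, as a stream processor would. The event data and class name are hypothetical, and a real deployment would rely on a distributed framework rather than in-process Python.

```python
from collections import defaultdict

# Hypothetical sales events: (store, amount).
EVENTS = [("store_a", 20.0), ("store_b", 5.0), ("store_a", 7.5)]


def batch_totals(events):
    """Batch: process the whole accumulated dataset in one scheduled run."""
    totals = defaultdict(float)
    for store, amount in events:
        totals[store] += amount
    return dict(totals)


class StreamingTotals:
    """Stream: update state on every event so results are available at once."""

    def __init__(self):
        self.totals = defaultdict(float)

    def on_event(self, store, amount):
        self.totals[store] += amount
        return self.totals[store]  # running total for this store


stream = StreamingTotals()
running = [stream.on_event(store, amount) for store, amount in EVENTS]

print("batch: ", batch_totals(EVENTS))
print("stream:", running)
```

Both paths end with identical totals; the streaming path simply exposes an up-to-date value after every event instead of after the nightly run.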

Why AI, Automation & Advanced Analytics Are Only Possible with Big Data Integration

Artificial intelligence models are only as smart as the data they consume. Big data integration techniques are the unsung heroes of successful machine learning deployments.

Building Robust Foundations for AI and ML

Data scientists need clean, consolidated, and diverse feature sets to create accurate predictive models. An advanced data pipeline architecture automates this extraction and preparation, so that models train on a complete picture of the enterprise rather than on fragments.

Enabling Predictive and Prescriptive Analytics

With the help of integrated data, organizations can transition from descriptive analytics (what happened) to predictive (what will happen) and prescriptive (what course of action to take). Historical sales data, external market indicators, and real-time inventory levels can all be combined to arrive at accurate demand forecasts or pricing dynamics.

Supporting Real-Time Intelligent Systems

Sophisticated automation needs a continuous feed of real-time data. A recommendation engine, for instance, needs to immediately cross-check a user’s current browsing behavior against their past purchases and stock levels across every location. This requires continuously running big data integration pipelines that deliver millisecond latency.

Enterprise Use Cases: Organizations Driving Value from Integrated Data

Theory needs to deliver real business value. The following examples show how DATAFOREST clients and leading enterprises use integrated data.

Building a 360-Customer View in All Channels

In industries such as retail, customer interactions are not limited to a single channel: customers move across POS systems, e-commerce platforms, loyalty apps, and support tickets. A CDP / CRM data integration solution helps enterprises create a unified 360-degree customer profile.

Case study example: A major retailer integrated its eCommerce data management to correlate online and offline behaviors, driving tightly targeted marketing programs that increased customer lifetime value.
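A unified customer profile of the kind described above can be sketched as a merge of per-channel records on a shared key. This minimal example assumes a normalized email address is a reliable identifier, which is a simplification; real identity resolution usually needs fuzzier matching. The channel names, fields, and values are hypothetical.

```python
from collections import defaultdict

# Hypothetical records from three channels.
POS = [{"email": "ana@example.com", "store_total": 120.0}]
ECOM = [{"email": "Ana@Example.com", "online_total": 80.0}]
SUPPORT = [
    {"email": "ana@example.com", "open_tickets": 1},
    {"email": "bo@example.com", "open_tickets": 2},
]


def customer_key(record):
    """Assumed matching rule: case-insensitive, whitespace-trimmed email."""
    return record["email"].strip().lower()


def build_profiles(**sources):
    """Merge records from every channel into one profile per customer."""
    profiles = defaultdict(lambda: {"channels": []})
    for channel, records in sources.items():
        for record in records:
            profile = profiles[customer_key(record)]
            profile["channels"].append(channel)
            # Copy every channel-specific attribute except the key itself.
            profile.update({k: v for k, v in record.items() if k != "email"})
    return dict(profiles)


profiles = build_profiles(pos=POS, ecommerce=ECOM, support=SUPPORT)
for email, profile in profiles.items():
    print(email, profile)
```

Recording which channels contributed to each profile makes it easy to see where a customer is active and where data is still missing.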

Optimization of the Supply Chain and Efficacy of Operations

Global supply chains, to put it mildly, are complex. Enterprises can anticipate bottlenecks by integrating supplier, logistics-partner, and weather data with their internal ERPs.

Example: We used advanced data parsing and integration to provide a manufacturing client with an integrated operations support dashboard, which helped reduce inventory holding costs and improve on-time delivery.

Financial Analytics and Risk Monitoring

Financial institutions need complete accuracy and real-time visibility to manage liquidity and regulatory risk.

To illustrate: A unified reporting solution for a financial company aggregates millions of transactional data points and stores them securely, enabling automated compliance reporting and instant fraud detection.

Real-Time Monitoring and Predictive Maintenance

In capital-intensive industries, unplanned downtime is disastrous. By combining IoT sensor data with historical maintenance records, companies can anticipate equipment failures in advance to build a more proactive predictive maintenance strategy instead of reactive repairs.
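As a rough illustration of combining the two data sources, the sketch below flags a machine when its recent average sensor reading is high and its last service is overdue. This is a deliberately simple rule-based stand-in, not a trained predictive model, and every machine name, reading, threshold, and date in it is hypothetical.

```python
from datetime import date
from statistics import mean

# Hypothetical IoT vibration readings (most recent last) per machine.
SENSOR_READINGS = {
    "pump_1": [2.1, 2.3, 4.8, 5.2, 5.5],
    "pump_2": [1.9, 2.0, 2.1, 2.0, 1.8],
}

# Hypothetical maintenance history: date of last service per machine.
LAST_SERVICE = {
    "pump_1": date(2025, 3, 1),
    "pump_2": date(2026, 1, 10),
}

VIBRATION_LIMIT = 4.0        # assumed alert threshold
SERVICE_INTERVAL_DAYS = 180  # assumed recommended service interval
WINDOW = 3                   # number of recent readings to average


def at_risk(today):
    """Return machines whose recent readings and service age both look risky."""
    flagged = []
    for machine, readings in SENSOR_READINGS.items():
        recent_avg = mean(readings[-WINDOW:])
        days_since_service = (today - LAST_SERVICE[machine]).days
        if recent_avg > VIBRATION_LIMIT and days_since_service > SERVICE_INTERVAL_DAYS:
            flagged.append(machine)
    return flagged


flagged = at_risk(date(2026, 2, 1))
print(flagged)
```

Neither signal alone would be as informative: the sensor data shows that something is changing, and the maintenance record suggests why it may matter now.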

Strategic Intelligence Through Internal and External Data

Integration isn’t just for internal systems. Combining internal data with external macroeconomic and social-sentiment data adds strategic foresight, allowing executives to adjust product strategies well ahead of market shifts. For example, an influencer marketing platform or email marketing SaaS platform depends heavily on integrating various external APIs to provide exact user analytics.

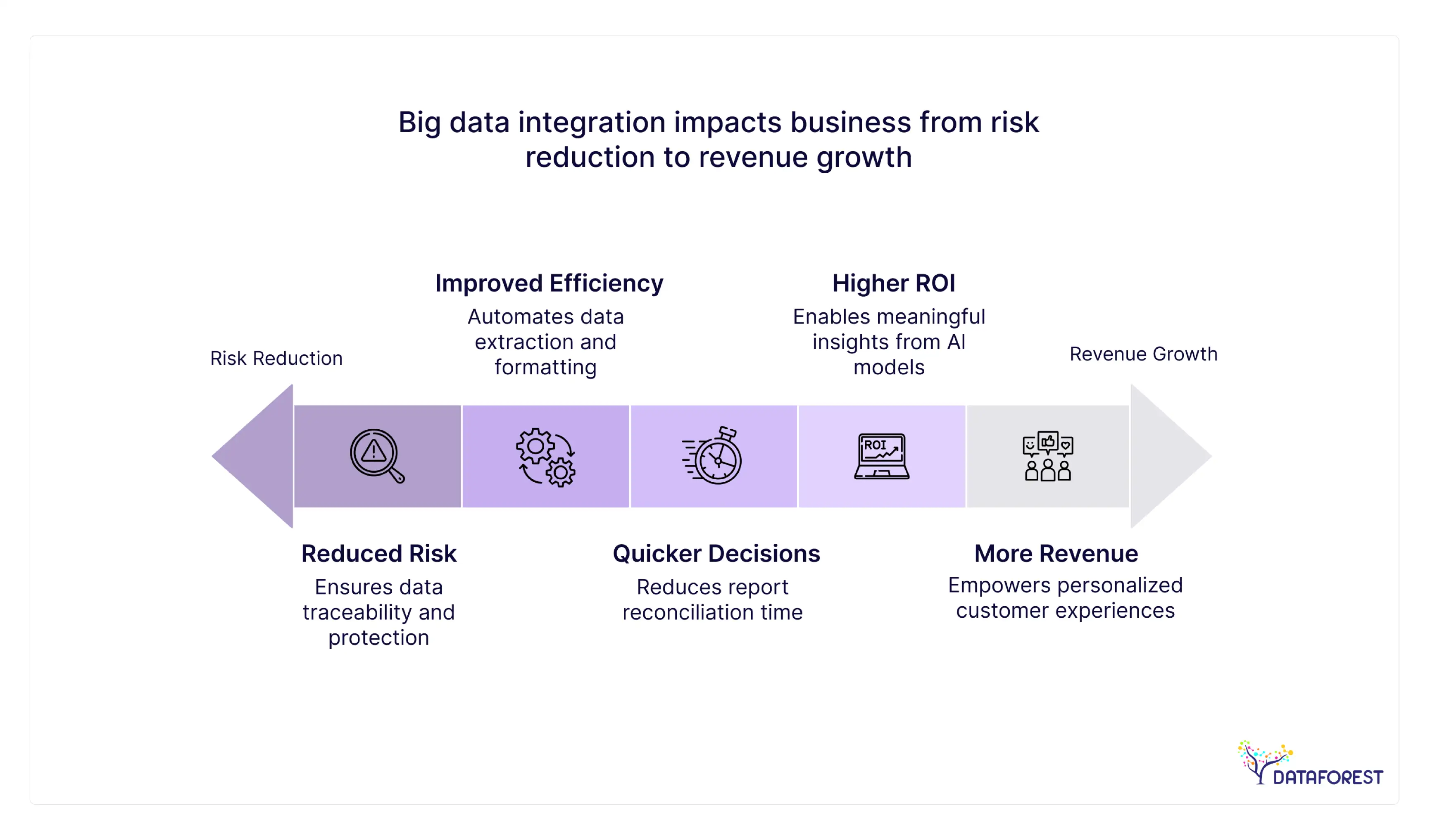

The Business Impact and ROI of Integrating Big Data

Executive sponsorship for data architecture and data engineering initiatives often hinges on proof of measurable returns.

Quicker and More Accurate Strategic Decisions

When data is seamlessly integrated, the time spent gathering and reconciling reports shrinks dramatically. Dashboards showing the current status of the business and related performance measures help executives reduce time-to-insight and speed up decision implementation.

Improved Operational Efficiency and Automation

Automated data pipelines remove the manual toil of extracting and formatting data. This frees highly paid data analysts and engineers to spend their time on high-value predictive modeling rather than routine data plumbing.

More Revenue Due to Improved Customer Intelligence

A 360-degree view across the customer journey enables precision around upselling, cross-selling, and churn mitigation. Integrated data ecosystems have a direct impact on top-line revenue as they empower hyper-personalized customer experiences at scale.

Higher Return on Investment from AI & Analytics Investments

Companies invest millions in AI efforts, but many of those efforts fail when the underlying data is poorly aggregated. A solid foundation enables these sophisticated analytical models to produce meaningful, actionable insights and increases the return on AI investments.

Reduced Risk and Improved Compliance

Centralized governance over unified data platforms allows sensitive information to be traceable, auditable, and protected per global standards, drastically reducing the risk of regulatory fines and reputational damage.

Why Big Data Integration Is the Foundation of the Modern Digital Enterprise

To frame integration as merely an IT exercise is a grave miscalculation. It is the vital organ of the contemporary business.

Integration Makes Way for Scalable AI and Automation

As we move further into the AI era, scalable data integration will be either a major bottleneck or an order-of-magnitude driver for enterprise automation. Seamless communication between systems allows autonomous processes to operate at a scale beyond human execution.

Integrated Data Enables Faster Innovation

The innovation cycle accelerates when curated, integrated datasets are available to developers and data scientists for self-service access. New digital products, services, and revenue streams can be prototyped and brought to market in weeks instead of years.

Companies That Use Data to Its Fullest Potential Are a Step Ahead

The numbers speak for themselves: data-driven organizations outperform their peers. Those who invest in comprehensive data integration solutions create a durable competitive advantage, functioning with a level of agility and foresight that siloed businesses cannot match.

The Strategic Imperative Moving Forward

For enterprises that wish to thrive through the latter half of this decade, moving from disjointed systems to a unified, intelligent data ecosystem is no longer optional. It takes architectural vision, technical talent, and a resolute mindset to view data as an organization’s greatest asset. Whether you are leading your organization through legacy modernization, migrating to the cloud, or preparing your infrastructure for advanced AI, an appropriate integration strategy is the catalyst for sustainable success.

Connect with our experts to see how these architectures apply to your particular enterprise problems.

Schedule a consultation with DATAFOREST to define your individual integration roadmap.

FAQ

What kind of business value can companies look forward to from their investment in big data integration?

Big data integration contributes directly to increasing revenue and reducing operational costs. Companies can look forward to significantly shortened time-to-insight, increased accuracy in predictive analytics, better customer personalization, and the removal of expensive manual data reconciliation processes. In the end, it builds the confidence needed for decision-making at the highest levels.

How is traditional data integration different from big data integration?

Traditional integration is mostly concerned with structured data flowing through scheduled batch ETL processes into relational databases (RDBMS). Big data integration, on the other hand, was built to deal with massive volume, velocity, and variety. It covers both batch and real-time streaming, and it handles structured, semi-structured, and unstructured data across complex distributed systems and cloud environments.

What role does big data integration play in digital transformation?

It sits at the very heart of digital transformation. If the underlying data is trapped in legacy silos, you cannot digitize and optimize business processes. Effective data integration models bridge different systems, allowing the seamless exchange of data needed to drive modern SaaS apps, mobile experiences, and automated workflows.

How scalable are modern big data integration architectures?

Modern architectures that rely on cloud-native services and data lakehouses are highly scalable. Because compute is separated from storage, enterprises can dynamically scale up processing power for peaks in data ingestion and scale it back down when the load subsides, sustaining high throughput without locking into fixed infrastructure costs.

Should we build data integration capabilities in-house or partner with experts?

Although some Fortune 500 companies have massive in-house data engineering divisions, collaboration with specialized consultancies can produce quicker, safer outcomes. Working with specialists gives you immediate access to established frameworks that prevent expensive architectural missteps, and it sidesteps the shortage of top-tier data engineering talent.

How can organizations start with big data integration?

The best approach is not to “boil the ocean.” Begin with a pilot that maps your existing data landscape and identifies high-value business use cases hampered by siloed data. Then pick suitable integration tools and shape an architecture around those needs. Executives in search of strategic insight can initiate a pilot project directly through the DATAFOREST contact form.
