Core DevOps & CI/CD Concepts

DevOps

Definition: DevOps is a set of practices, cultural philosophies, and tools that combine software development (Dev) and IT operations (Ops) into a unified, collaborative workflow aimed at shortening the software delivery lifecycle and continuously delivering high-quality software. Rather than development and operations teams working in separate silos — where developers write code and 'throw it over the wall' to operations to deploy — DevOps unifies them around shared goals, shared tooling, and shared responsibility for the entire system.

For businesses, DevOps means faster feature releases, fewer production incidents, quicker recovery when things go wrong, and a team culture where everyone is invested in both building and running reliable software.

Technical Insight: DevOps is operationalized through the CALMS framework: Culture (shared ownership, blameless post-mortems), Automation (CI/CD pipelines, IaC), Lean (eliminating waste in delivery processes), Measurement (DORA metrics: Deployment Frequency, Lead Time for Changes, Change Failure Rate, Mean Time to Recovery), and Sharing (knowledge, tooling, on-call responsibilities). The DevOps toolchain spans version control (Git), CI/CD (Jenkins, GitHub Actions, GitLab CI), containerization (Docker, Kubernetes), IaC (Terraform, Ansible), and observability (Prometheus, Grafana, Datadog).

DevOps Culture

Definition: DevOps Culture refers to the organizational mindset, values, and behavioral norms that underpin successful DevOps adoption. It is the human side of DevOps — the recognition that tools and processes alone cannot transform software delivery without a corresponding shift in how teams collaborate, communicate, and take responsibility. DevOps culture emphasizes breaking down silos between development, operations, security, and business teams, replacing blame and fear of failure with psychological safety, continuous learning, and shared ownership.

Organizations that adopt DevOps tools without the cultural shift consistently fail to realize the promised benefits — because culture determines how people use tools, not the other way around.

Technical Insight: DevOps culture is measured and fostered through specific practices: Blameless Post-Mortems (analyzing incidents without assigning personal fault, focusing on systemic improvements), You Build It, You Run It (developers own their services in production, creating accountability for quality), Continuous Feedback Loops (production telemetry feeds back to development teams in real time), and Psychological Safety (team members can raise concerns and experiment without fear of punishment). The Westrum Organizational Culture model classifies cultures as Pathological, Bureaucratic, or Generative — research consistently shows Generative cultures correlate with elite DevOps performance.

DevOps Lifecycle

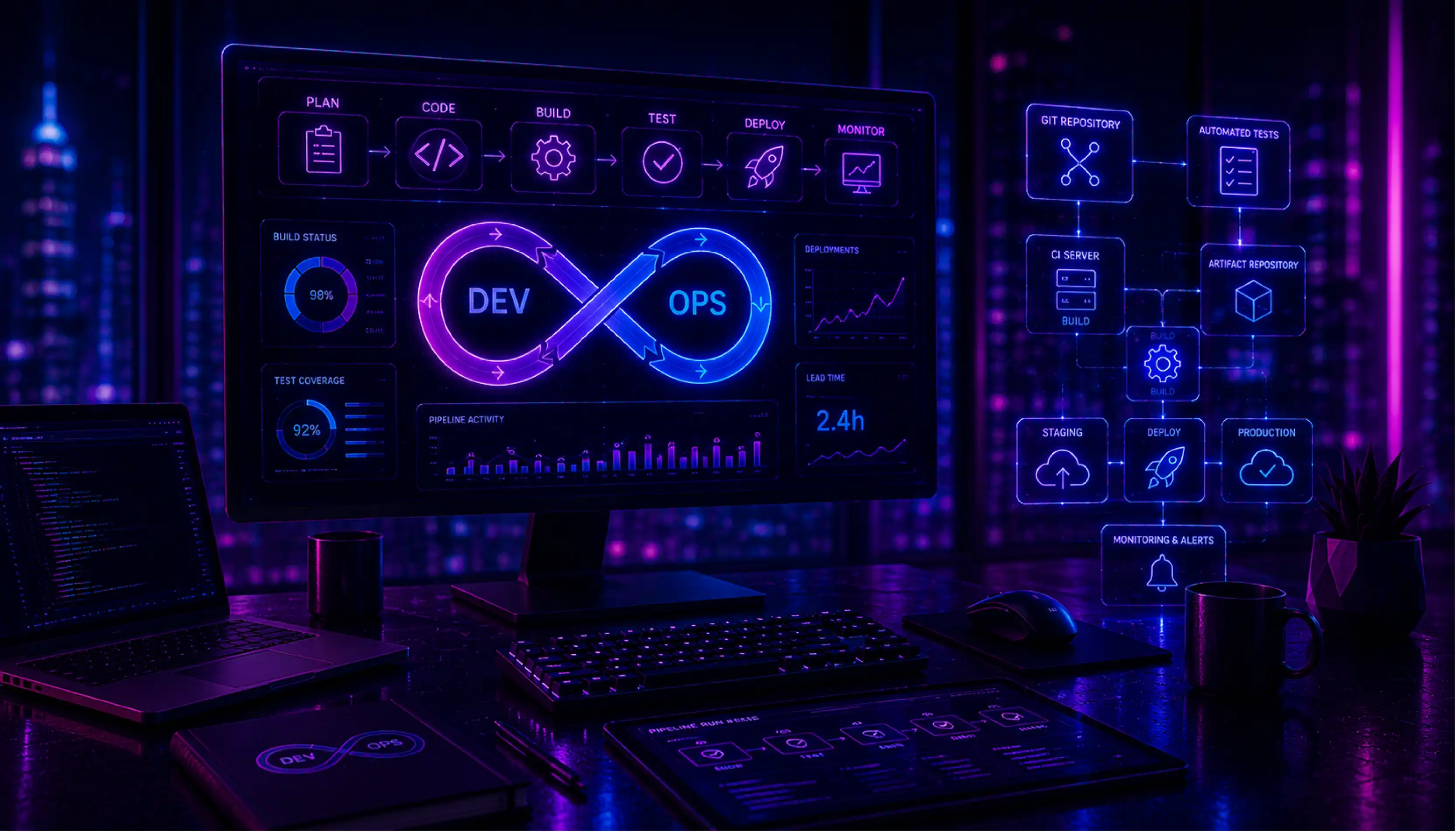

Definition: The DevOps Lifecycle is the continuous, iterative cycle of phases through which software moves from idea to production and back — representing how DevOps teams plan, build, test, deploy, operate, and monitor software in an integrated, repeating loop rather than as a one-way waterfall. The lifecycle is often depicted as an infinity symbol (or figure-eight) to emphasize its continuous, never-ending nature: learnings from monitoring feed back into planning the next iteration.

Understanding the DevOps lifecycle helps organizations identify where they have automation gaps, tooling deficiencies, or process bottlenecks that slow down delivery or degrade reliability.

Technical Insight: The eight phases of the DevOps lifecycle are: Plan (Jira, Linear — requirements and sprint planning), Code (Git, GitHub/GitLab — version control and code review), Build (Maven, Gradle, npm — compiling and packaging), Test (Jest, Selenium, JUnit — automated testing), Release (versioning, approval gates, release notes), Deploy (Kubernetes, Helm, Argo CD — deployment to environments), Operate (configuration management, incident response), and Monitor (Prometheus, Datadog, Grafana — observability and alerting). CI/CD pipelines automate the Build through Deploy phases, while IaC automates the Operate phase.

DevSecOps

Definition: DevSecOps is an extension of DevOps that integrates security practices, tools, and responsibilities directly into the software development and delivery lifecycle — rather than treating security as a separate gate at the end of the process. The core principle is 'shift left': move security testing and validation earlier in the development pipeline, where fixing vulnerabilities is 10-100x cheaper than discovering them in production.

In a DevSecOps model, every developer is responsible for writing secure code, security scans run automatically in CI/CD pipelines, and security teams act as enablers and advisors rather than gatekeepers who slow down delivery.

Technical Insight: DevSecOps is implemented through automated security tooling embedded in CI/CD pipelines: SAST (Static Application Security Testing — analyzing source code for vulnerabilities without running it; tools: SonarQube, Semgrep, Checkmarx), DAST (Dynamic Application Security Testing — testing running applications for vulnerabilities; tools: OWASP ZAP, Burp Suite), SCA (Software Composition Analysis — scanning third-party dependencies for known CVEs; tools: Snyk, Dependabot), Container Image Scanning (Trivy, Grype), and Secrets Detection (GitGuardian, TruffleHog — preventing API keys and credentials from being committed to code).

NoOps

Definition: NoOps (No Operations) is a concept describing the ideal end-state of automation where IT operations tasks are so fully automated that a dedicated operations team is no longer required for routine deployment, scaling, and maintenance activities. It represents the logical extension of DevOps and serverless computing: when infrastructure provisions itself, applications deploy automatically, and systems self-heal, the manual operational burden approaches zero.

In practice, NoOps does not mean zero operations staff — it means that operational complexity is abstracted away by cloud platforms and automation, freeing engineers to focus entirely on building product value rather than managing infrastructure.

Technical Insight: NoOps is enabled by fully managed cloud services that eliminate infrastructure management: Serverless compute (AWS Lambda, Google Cloud Run — no servers to provision or patch), managed databases (Aurora Serverless, Firestore — no DBA tasks), PaaS platforms (Heroku, Render, Fly.io — deploy code, not containers), and GitOps workflows (Argo CD, Flux — the Git repository is the single source of truth; any push automatically triggers deployment and reconciliation). The spectrum from DevOps to NoOps is defined by how much infrastructure responsibility the cloud provider absorbs.

AIOps

Definition: AIOps (Artificial Intelligence for IT Operations) is the application of machine learning and advanced analytics to automate and enhance IT operations processes — including event correlation, anomaly detection, root cause analysis, and incident response. As modern IT environments become too complex and fast-moving for human operators to monitor manually (producing millions of alerts per day), AIOps platforms use AI to filter noise, identify patterns, and surface actionable insights.

For organizations running large-scale cloud infrastructure, AIOps reduces mean time to detection (MTTD) and mean time to recovery (MTTR) for incidents, prevents outages through predictive failure detection, and dramatically reduces alert fatigue for on-call engineers.

Technical Insight: AIOps platforms (Dynatrace, Moogsoft, BigPanda, Splunk ITSI) ingest telemetry from across the IT stack — metrics, logs, traces, topology data, and change events — and apply ML algorithms to: Event Correlation (grouping thousands of related alerts into a single incident), Anomaly Detection (identifying unusual behavior in metrics and logs using statistical baselines or LSTM time-series models), Root Cause Analysis (using topology graphs and causal inference to identify the upstream source of failures), and Predictive Analytics (forecasting capacity exhaustion or failure probability before impact). Integration with ITSM tools (ServiceNow, PagerDuty) enables automated ticket creation and runbook execution.

CI/CD

Definition: CI/CD stands for Continuous Integration and Continuous Delivery (or Deployment) — a set of practices and an automated pipeline that enables development teams to deliver software changes frequently, reliably, and with minimal manual intervention. CI (Continuous Integration) means every code change is automatically built and tested when a developer pushes to the repository. CD (Continuous Delivery) means the tested code is automatically prepared for release; Continuous Deployment goes one step further and automatically deploys every passing change to production.

CI/CD is the technical backbone of modern software delivery: it eliminates the pain of infrequent, risky 'big bang' releases by making deployments small, frequent, and boring.

Technical Insight: A CI/CD pipeline typically stages: Source (Git push triggers the pipeline), Build (compile code, build Docker image), Test (unit tests, integration tests, SAST security scans — all run in parallel), Staging Deploy (deploy to a staging environment for end-to-end testing), and Production Deploy (automated or one-click release). Key metrics are pipeline duration (target: under 10 minutes for fast feedback) and pipeline pass rate. Branching strategies (GitFlow, trunk-based development) determine how code flows through the pipeline. Leading tools: GitHub Actions, GitLab CI, Jenkins, CircleCI, Argo CD.

CI/CD: Continuous Integration and Deployment

Definition: Continuous Integration (CI) is the practice of automatically integrating code changes from multiple developers into a shared repository multiple times per day, with each integration verified by an automated build and test suite. It eliminates the 'integration hell' of teams working on isolated branches for weeks, then trying to merge everything at once.

Continuous Deployment (CD) extends CI by automatically releasing every code change that passes all automated tests directly to production — without any manual approval step. This enables companies like Netflix, Amazon, and Google to deploy thousands of times per day, treating each deployment as a routine, low-risk event rather than a stressful, high-stakes release.

Technical Insight: CI is implemented by configuring a CI server (GitHub Actions, GitLab CI, Jenkins) to trigger on every push or pull request: checkout code, install dependencies, run the full test suite (unit, integration, contract tests), run code quality checks (linting, coverage thresholds), and report results back to the pull request. CD adds deployment stages: build a production artifact (Docker image, JAR, binary), push to a registry, and deploy using a strategy (Blue-Green: switch traffic between two identical environments; Canary: gradually roll out to a percentage of users; Rolling: replace instances one by one). Feature flags decouple deployment from feature release.

CI/CD Pipeline

Definition: A CI/CD Pipeline is the automated sequence of stages that software code passes through — from the moment a developer commits a change to the moment that change is running in production. It is the assembly line of modern software development: a structured, repeatable process that enforces quality gates at every stage and moves code forward automatically when each gate is passed.

A well-designed CI/CD pipeline is the most important infrastructure investment a software team can make: it compresses release cycles from months to hours, catches bugs before they reach customers, and creates a documented, auditable record of every change deployed to production.

Technical Insight: Pipelines are defined as code (YAML in GitHub Actions, GitLab CI, or Jenkinsfile in Jenkins), version-controlled alongside the application code. Pipeline stages are parallelized where possible to minimize total duration. Advanced patterns include: Matrix Builds (running the same tests across multiple language versions or OS combinations simultaneously), Artifact Promotion (the same immutable Docker image is promoted from dev to staging to production, never rebuilt), Environment-Specific Config Injection (secrets and configs injected at deploy time via Vault or AWS Secrets Manager), and Pipeline as Code with DRY principles using reusable workflow templates.

Infrastructure as Code (IaC)

Definition: Infrastructure as Code (IaC) is the practice of managing and provisioning computing infrastructure — servers, networks, databases, load balancers, DNS records — through machine-readable configuration files rather than manual processes or interactive GUIs. Instead of a system administrator clicking through a cloud console to spin up servers, IaC defines the desired infrastructure state in code files that are version-controlled, reviewed, tested, and applied automatically.

IaC brings the best practices of software engineering to infrastructure: version control (every change is tracked in Git), code review (infrastructure changes go through pull requests), automated testing, and the ability to recreate any environment identically in minutes — eliminating 'it works on my machine' problems between environments.

Technical Insight: IaC tools fall into two categories: Declarative (you describe the desired end state, the tool figures out how to get there — e.g., Terraform, AWS CloudFormation, Pulumi) and Imperative (you write scripts describing the steps to take — e.g., Ansible, Chef, Puppet). Terraform is the dominant multi-cloud IaC tool: it uses HCL (HashiCorp Configuration Language) to define resources, maintains a state file tracking current infrastructure, and plans changes before applying them (terraform plan / terraform apply). Best practices include remote state storage (S3 + DynamoDB locking), modular code structure, and integrating IaC into CI/CD pipelines for automated infrastructure deployment.

Terraform

Definition: Terraform is an open-source Infrastructure as Code (IaC) tool created by HashiCorp that enables engineers to define, provision, and manage cloud infrastructure across any provider — AWS, Azure, GCP, Kubernetes, and hundreds of others — using a single, unified configuration language (HCL: HashiCorp Configuration Language). It has become the industry-standard tool for cloud infrastructure automation, used by the majority of organizations running production workloads on public cloud.

With Terraform, spinning up a complete, production-ready cloud environment — including VPCs, subnets, load balancers, databases, and application clusters — takes minutes from a single command, and the entire setup is reproducible, documented, and version-controlled in Git.

Technical Insight: Terraform operates through a core workflow: Write (define infrastructure in .tf files using HCL), Plan (terraform plan generates an execution plan showing exactly what will be created, changed, or destroyed — with no actual changes made), Apply (terraform apply executes the plan, provisioning real infrastructure and updating the state file). The state file is the source of truth mapping Terraform config to real-world resources — stored remotely in S3 or Terraform Cloud for team collaboration with state locking via DynamoDB. The Terraform Registry provides thousands of verified provider plugins and reusable modules. Terragrunt extends Terraform for managing large, multi-account, multi-region infrastructure at scale.

DevOps Practices & CI/CD: The Complete Software Delivery Glossary

%20(1).webp)