Most data teams don't struggle with tooling. They struggle with the wrong tooling, bought for the wrong reasons, glued together by people who have since left the company.

According to Mordor Intelligence (2025), the big data engineering services market reached $91.54 billion in 2025 and is projected to hit $187.19 billion by 2030, growing at a 15.38% CAGR. That growth tracks a real pattern: organizations are spending more on data infrastructure than ever, yet 30 to 40 percent of data pipelines experience failures on a weekly basis, per industry benchmarks. Money is flowing in. Results aren't flowing out.

Data platform consulting exists to close that gap. It connects the investment in data infrastructure to measurable business outcomes by diagnosing what's broken, designing what should replace it, and building the thing that actually works. A data platform assessment is typically where it starts, but the real value comes from the strategy and architecture decisions that follow.

This article covers the full scope: what data platform consulting includes, how to select a platform (Databricks, Snowflake, Fabric, or BigQuery), which architecture patterns fit which situations, what it costs, what can go wrong, and how to evaluate a consulting partner before signing anything.

Data Platform Consulting in 2026: Fix the Foundation

Data teams are failing because of the wrong tools, inherited from people who no longer work there.

The Spending Paradox: The data engineering market is set to double to $187B by 2030, yet 30 to 40% of pipelines still fail weekly. Investment is rising, but results are stalling.

The Consulting Bridge: Specialized consulting closes this gap, turning "broken infra" into business outcomes through rigorous assessment and architectural strategy.

The Roadmap: This guide breaks down platform selection (Databricks, Snowflake, etc.), costs, common pitfalls, and how to vet a partner who actually delivers.

Key takeaways

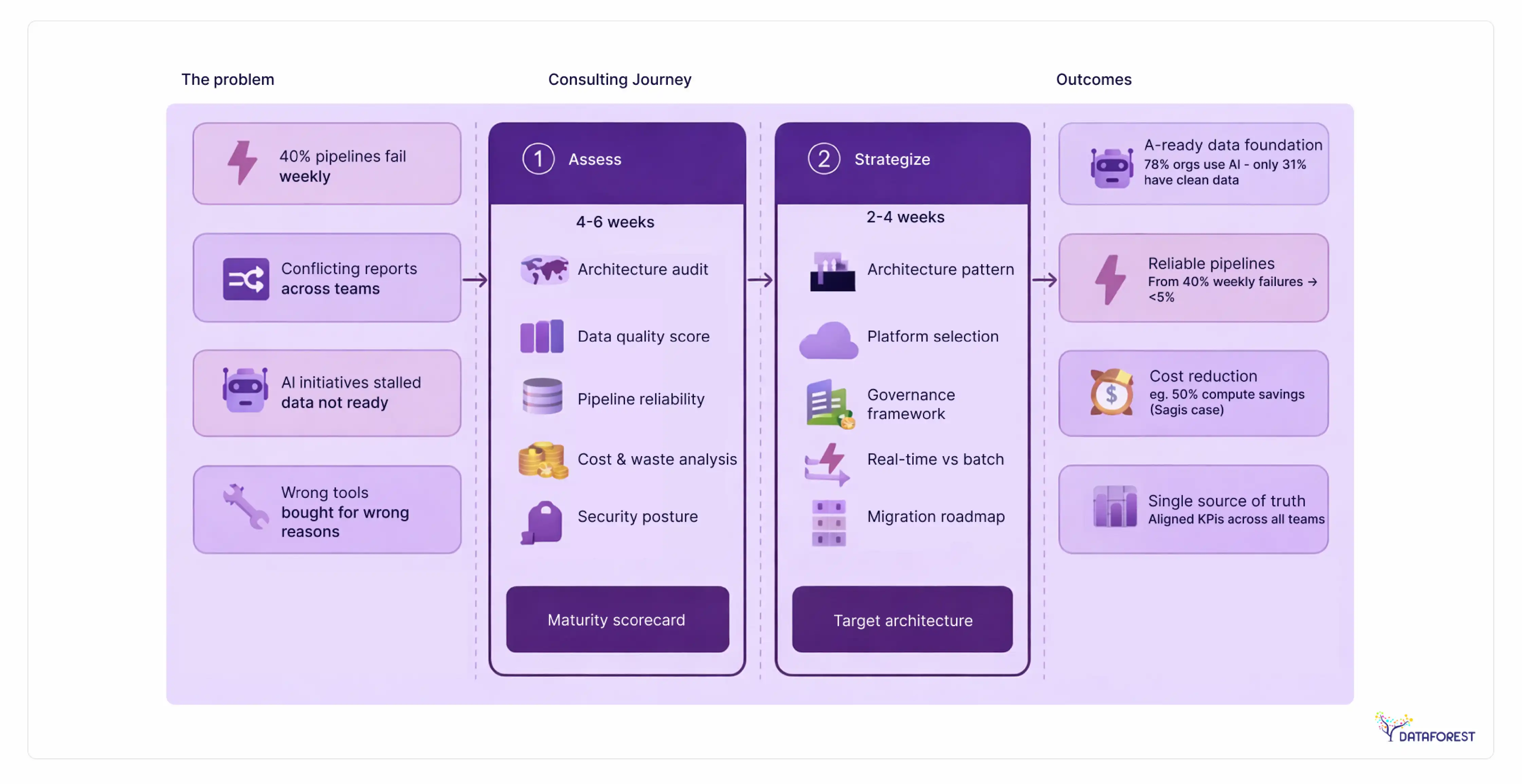

- Only 31% of organizations report having AI-ready data, even as 78% have adopted AI in at least one business function. The gap is a data platform problem, not an AI problem.

- Diagnosing your data maturity before selecting a platform prevents the most expensive mistake in data engineering: locking into a tool that doesn't match your workload.

- Data platform consulting engagements range from $15K for a sprint assessment to $500K+ for full implementation with a dedicated team.

- 82% of organizations now use real-time streaming in their pipeline architectures, making lakehouse the default starting point for most new platform builds.

Why data platform consulting determines AI readiness: the $91.5B market shift

Here's the number that explains why data platform consulting exists as a category: according to McKinsey (2025), 78% of organizations now use AI in at least one business function. But only 31% report that their data is actually ready for it.

That's a $91.54 billion market built on a shaky foundation. Companies buy AI tools, hire ML engineers, and run proof-of-concept projects. Then they discover their data is siloed across 15 systems, their pipelines break every other week, and their dashboards show different numbers depending on who built them.

The instinct is to blame the AI. The actual problem sits one layer beneath it: the data platform.

Cloud deployment already captured 65.61% of the big data engineering services market in 2024, according to Mordor Intelligence. The shift to the cloud has happened. But migrating to the cloud without fixing the underlying data architecture just moves broken pipelines to more expensive infrastructure.

This is where the consulting function comes in. Organizations often turn to modern data architecture experts who start with diagnosis: what data do you actually have, how does it move, who uses it, and what's breaking. A data platform consultant doesn't start with tools. The platform recommendation comes after the assessment, not before it. Diagnose data maturity before selecting a platform. Every other sequence costs more and delivers less.

What data platform consulting covers: from assessment to AI-ready infrastructure

Data platform consulting is advisory and implementation work that covers the full lifecycle of an organization's data infrastructure. It spans three phases: assessment, strategy, and implementation.

Most organizations arrive at a consulting engagement with a symptom, not a diagnosis. The dashboards are unreliable. Reports from different teams show conflicting numbers. The data team spends 70% of its time maintaining pipelines instead of building new ones. AI initiatives stall because the training data is incomplete, inconsistent, or inaccessible.

These are all data platform problems. Consulting gives them a structured fix.

Data platform assessment: what gets evaluated in weeks 1-4

A typical data platform assessment runs 4 to 6 weeks and covers:

- Current architecture audit—inventory of all data sources, pipelines, storage systems, and consumption tools

- Data quality scoring—measuring completeness, accuracy, timeliness, and consistency across systems

- Pipeline reliability analysis—failure rates, latency, manual intervention frequency

- BI and reporting review—dashboard accuracy, semantic layer consistency, KPI standardization

- Cost analysis—infrastructure spend vs. utilization, identify waste

- Security and compliance posture—GDPR, HIPAA, SOC2 readiness

The output is a maturity scorecard and a prioritized roadmap, not a sales pitch for a specific tool.
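
As a rough illustration of the data quality scoring step, the sketch below computes two of the four dimensions listed above, completeness and timeliness, over a handful of records. The field names, the seven-day freshness window, and the sample rows are all hypothetical; a real assessment would define these per source system.

```python
from datetime import datetime, timedelta


def completeness(records, required_fields):
    """Share of required field values that are present (not None or empty)."""
    total = len(records) * len(required_fields)
    if total == 0:
        return 0.0
    filled = sum(
        1
        for row in records
        for field in required_fields
        if row.get(field) not in (None, "")
    )
    return filled / total


def timeliness(records, timestamp_field, max_age, now):
    """Share of records whose timestamp is within max_age of `now`."""
    if not records:
        return 0.0
    fresh = sum(1 for row in records if now - row[timestamp_field] <= max_age)
    return fresh / len(records)


# Hypothetical sample rows, for illustration only.
now = datetime(2026, 1, 15)
rows = [
    {"customer_id": "c1", "email": "x@example.com", "updated_at": datetime(2026, 1, 14)},
    {"customer_id": "c2", "email": "", "updated_at": datetime(2025, 11, 1)},
]

print("completeness:", completeness(rows, ["customer_id", "email"]))
print("timeliness:", timeliness(rows, "updated_at", timedelta(days=7), now))
```

Scores like these are only useful as a baseline: the same functions run again after remediation show whether quality actually moved.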

Data platform strategy: designing the target architecture

Strategy translates assessment findings into architecture decisions. This is where you determine:

- Which data architecture pattern fits (warehouse, lakehouse, mesh, or fabric)

- Which cloud platform and analytical engine to use

- What governance framework to implement

- How to handle real-time vs. batch processing

- What the migration path looks like, phased or parallel

The strategy phase typically runs 2 to 4 weeks and produces a technical design document, a cost model, and a migration plan with decision gates.

Implementation and optimization: building with decision gates

Implementation follows the strategy with explicit checkpoints. At each gate, the team evaluates: does the direction still make sense given what we've learned? This prevents the most common failure pattern in data engineering — running a 6-month project on autopilot without validating assumptions.

Implementation timelines vary from 3 months for a scoped modernization to 12+ months for full platform replacement. The difference depends on source system complexity, data volume, and team capacity.
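
One way to make a decision gate concrete is to write the checkpoint down as a small rule. The sketch below is an assumption about what such a rule could look like, not a standard: the `GateStatus` fields, the 10% tolerance, and the three outcomes are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class GateStatus:
    budget_planned: float        # budget planned up to this gate
    budget_spent: float          # actual spend up to this gate
    weeks_planned: int
    weeks_elapsed: int
    assumptions_validated: bool  # did this phase confirm what the plan assumed?


def gate_decision(status, tolerance=0.10):
    """Return 'proceed', 'rescope', or 'stop' for a phase checkpoint."""
    over_budget = status.budget_spent > status.budget_planned * (1 + tolerance)
    over_time = status.weeks_elapsed > status.weeks_planned * (1 + tolerance)
    if not status.assumptions_validated:
        # Broken assumptions plus overruns on both axes: halt and re-plan.
        return "stop" if (over_budget and over_time) else "rescope"
    return "rescope" if (over_budget or over_time) else "proceed"


print(gate_decision(GateStatus(100_000, 105_000, 8, 8, True)))
```

The point of encoding the rule is less the arithmetic than the commitment: the team agrees in advance on what triggers a rescope.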

What data platform consulting costs: engagement models & ROI analysis

No organization should enter a consulting engagement without understanding the cost structure. Here are the four standard engagement models, what they include, and where they fit:

4 engagement models compared: from sprint assessment to dedicated team

These ranges reflect market rates as of 2026 and vary by geography, team seniority, and scope complexity. Always request scoped proposals from multiple vendors before committing.

Diagnose your data maturity before selecting a platform—or an engagement model. A sprint assessment often reveals whether a full implementation is even necessary, saving organizations from six-figure commitments they didn't need.

Measuring ROI: the platform consulting payback formula

Most consulting firms promise ROI without quantifying it. Here's a formula that works:

Annual savings = (Cost of bad data × Error rate reduction) + (Infrastructure waste × Utilization improvement) + (Manual pipeline hours × Automation rate × Hourly cost)

For a concrete example: Sagis Diagnostics, a healthcare diagnostics company, had 21 fragmented data sources feeding inconsistent reports across departments. After a platform consulting engagement, they unified those sources into a single platform and reduced compute costs by 50%. The engagement paid for itself within the first year through infrastructure savings alone.

The key is measuring before and after, not estimating from a pitch deck. A consulting partner who can't baseline your current costs and project concrete savings against that baseline is a red flag.
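
The payback formula in this section translates directly into a few lines of code. The sketch below follows the formula term by term; the `payback_months` helper and every input value are hypothetical and exist only to show the arithmetic.

```python
def annual_savings(
    cost_of_bad_data,
    error_rate_reduction,
    infrastructure_waste,
    utilization_improvement,
    manual_pipeline_hours,
    automation_rate,
    hourly_cost,
):
    """Annual savings, following the three terms of the formula above."""
    data_quality = cost_of_bad_data * error_rate_reduction
    infrastructure = infrastructure_waste * utilization_improvement
    labor = manual_pipeline_hours * automation_rate * hourly_cost
    return data_quality + infrastructure + labor


def payback_months(engagement_cost, savings_per_year):
    """Months until the engagement cost is recovered (hypothetical helper)."""
    if savings_per_year <= 0:
        return float("inf")
    return 12 * engagement_cost / savings_per_year


# Made-up inputs, for illustration only.
savings = annual_savings(
    cost_of_bad_data=400_000,
    error_rate_reduction=0.5,
    infrastructure_waste=200_000,
    utilization_improvement=0.5,
    manual_pipeline_hours=2_000,
    automation_rate=0.5,
    hourly_cost=100,
)
print("annual savings:", savings)
print("payback (months):", payback_months(200_000, savings))
```

With these made-up inputs the formula yields $400,000 in annual savings, so a $200,000 engagement would pay back in six months. The rates are fractions between 0 and 1 and should come from measured baselines rather than estimates.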

Data platform consulting by industry: healthcare, finance & retail platform requirements

The platform is the same, but the constraints aren't. Industry-specific regulatory requirements, data types, and use cases change what "good" looks like.

Healthcare: HIPAA-compliant pipelines and EHR integration

Healthcare data platforms must handle PHI (Protected Health Information) under HIPAA. That means encryption at rest and in transit, audit logging for every data access event, and access controls at the row and column level, not just the table level.

The typical healthcare data platform challenge: EHR systems (Epic, Cerner) generate massive volumes of semi-structured data that needs to flow into analytics without exposing patient identifiers to BI users. The platform architecture must separate clinical data pipelines from analytics pipelines, with a governed semantic layer in between.
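
To show what row-level and column-level controls mean in practice, here is a minimal sketch of an analytics view that filters rows by facility and strips identifier columns before data reaches BI users. The column names, the facility filter, and the salted-hash pseudonym are assumptions made for illustration. This shows the shape of the control only and is not a HIPAA de-identification method.

```python
import hashlib

# Hypothetical set of columns treated as direct identifiers.
PHI_COLUMNS = {"patient_name", "ssn", "date_of_birth"}


def pseudonymize(value, salt):
    """Replace an identifier with a stable, salted token (illustrative only)."""
    digest = hashlib.sha256((salt + str(value)).encode("utf-8")).hexdigest()
    return digest[:16]


def analytics_view(rows, allowed_facilities, salt):
    """Apply a row-level filter, then column-level masking."""
    view = []
    for row in rows:
        if row["facility"] not in allowed_facilities:
            continue  # row-level control: caller may not see this facility
        safe = {k: v for k, v in row.items() if k not in PHI_COLUMNS}
        safe["patient_id"] = pseudonymize(row["patient_id"], salt)
        view.append(safe)
    return view


# Hypothetical clinical rows.
clinical_rows = [
    {"patient_id": "p1", "patient_name": "A. Example", "ssn": "000-00-0000",
     "date_of_birth": "1990-01-01", "facility": "north", "lab_result": 4.2},
    {"patient_id": "p2", "patient_name": "B. Example", "ssn": "000-00-0001",
     "date_of_birth": "1985-06-30", "facility": "south", "lab_result": 5.1},
]

for row in analytics_view(clinical_rows, {"north"}, salt="demo-salt"):
    print(row)
```

On a real platform these rules would normally live in the warehouse or lakehouse governance layer rather than in application code, so that every consumer gets the same view.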

Platform recommendation: Databricks or Snowflake with dedicated HIPAA-compliant configurations. Both offer BAA (Business Associate Agreement) support on major cloud providers.

Financial services: real-time fraud detection and regulatory reporting

Financial services add two constraints that healthcare doesn't:

- Sub-second latency requirements (for fraud detection, trading signals)

- Regulatory reporting mandates (Basel III, MiFID II, SOX) that require data lineage tracking from source to report

The data platform must support both real-time streaming for operational use cases and batch processing for regulatory reports, often from the same data sources. Lakehouse architectures handle this well. Pure warehouses typically don't.
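
Lineage tracking from source to report can be pictured as a walk over a dependency graph. The sketch below assumes a hypothetical lineage map in which each dataset lists the datasets it is derived from; the dataset names are invented, and real platforms record this metadata in a catalog rather than a hand-written dictionary.

```python
# Hypothetical lineage map: dataset -> datasets it is derived from.
LINEAGE = {
    "regulatory_report": ["risk_aggregates"],
    "risk_aggregates": ["trades_clean", "positions_clean"],
    "trades_clean": ["trades_raw"],
    "positions_clean": ["positions_raw"],
}


def trace_sources(dataset, lineage):
    """Return the root sources that a dataset ultimately depends on."""
    seen = set()
    sources = set()
    stack = [dataset]
    while stack:
        node = stack.pop()
        if node in seen:
            continue
        seen.add(node)
        parents = lineage.get(node, [])
        if not parents:
            sources.add(node)  # no upstream entry: treat as a source system
        stack.extend(parents)
    return sources


print(sorted(trace_sources("regulatory_report", LINEAGE)))
```

An auditor's question ("which source systems feed this report?") becomes a single call, which is the practical value of keeping lineage as data.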

Platform recommendation: Databricks (for real-time + ML workloads) or Snowflake (for reporting-heavy shops). Financial institutions on Azure should also evaluate Microsoft Fabric's Purview integration for built-in data lineage.

Retail and e-commerce: customer 360 and demand forecasting

Retail platforms need to unify online behavior data (clickstream, app events), transaction history, inventory systems, and third-party marketing data into a single customer view. The volume is high, the variety is wide, and the latency tolerance is low—personalization engines need near-real-time data.

The biggest platform challenge in retail: connecting the customer across channels. A user who browses on mobile, buys in-store, and receives email campaigns generates three separate data trails that need to converge.
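
The convergence of separate data trails can be sketched as identity stitching: events that share any identifier are grouped into one profile. The example below uses a small union-find structure and exact matching on identifiers. The event shape and identifier formats are hypothetical, and production identity resolution is often probabilistic rather than exact.

```python
def stitch_identities(events):
    """Group events that share any identifier into one customer profile."""
    parent = {}

    def find(x):
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving
            x = parent[x]
        return x

    def union(a, b):
        parent[find(a)] = find(b)

    # Link every identifier seen together on the same event.
    for event in events:
        ids = event["identifiers"]
        for other in ids[1:]:
            union(ids[0], other)

    # Collect channels per resolved profile.
    profiles = {}
    for event in events:
        root = find(event["identifiers"][0])
        profiles.setdefault(root, []).append(event["channel"])
    return list(profiles.values())


# Hypothetical events: one shopper across three channels, plus a stranger.
events = [
    {"channel": "mobile", "identifiers": ["device:abc", "email:jo@example.com"]},
    {"channel": "store", "identifiers": ["loyalty:991", "email:jo@example.com"]},
    {"channel": "email", "identifiers": ["email:jo@example.com"]},
    {"channel": "web", "identifiers": ["device:zzz"]},
]

print(stitch_identities(events))
```

Here the mobile, in-store, and email trails collapse into one profile because they share an email address, while the anonymous web session stays separate.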

Platform recommendation: Snowflake (strong data sharing for multi-partner ecosystems) or BigQuery (serverless scaling for event-heavy workloads).

5 reasons data platform projects fail—and how to prevent each one

Talking about what goes right is easy. What actually helps is understanding what goes wrong.

Failure #1: Tool-first thinking. The organization selects Databricks, Snowflake, or Fabric before understanding what its data actually looks like. A tool-first choice can be defensible when a known cost or compliance constraint drives it. More often, teams optimize for features they don't need while ignoring constraints they didn't know they had. Prevention: always run an assessment before issuing a purchase order. Diagnose data maturity before selecting a platform. It's cheaper to discover misalignment in a 4-week assessment than in a 6-month implementation.

Failure #2: No data governance from day one. Teams build pipelines without defining data ownership, quality standards, or access policies. Six months in, nobody trusts the data, and the platform becomes another legacy system. Prevention: governance isn't a phase that comes after implementation. It starts in the assessment and runs through every stage.

Failure #3: Scope creep without decision gates. What started as a warehouse migration becomes a full data mesh transformation because someone read a blog post. Prevention: define explicit decision gates between phases. At each checkpoint, re-evaluate the scope against the budget and timeline.

Failure #4: Vendor lock-in by default. Using proprietary features of a single cloud provider without abstraction layers. When pricing changes or requirements shift, the migration cost becomes a hostage payment. Prevention: design for portability from the start. Use open table formats (Delta Lake, Apache Iceberg) where possible.

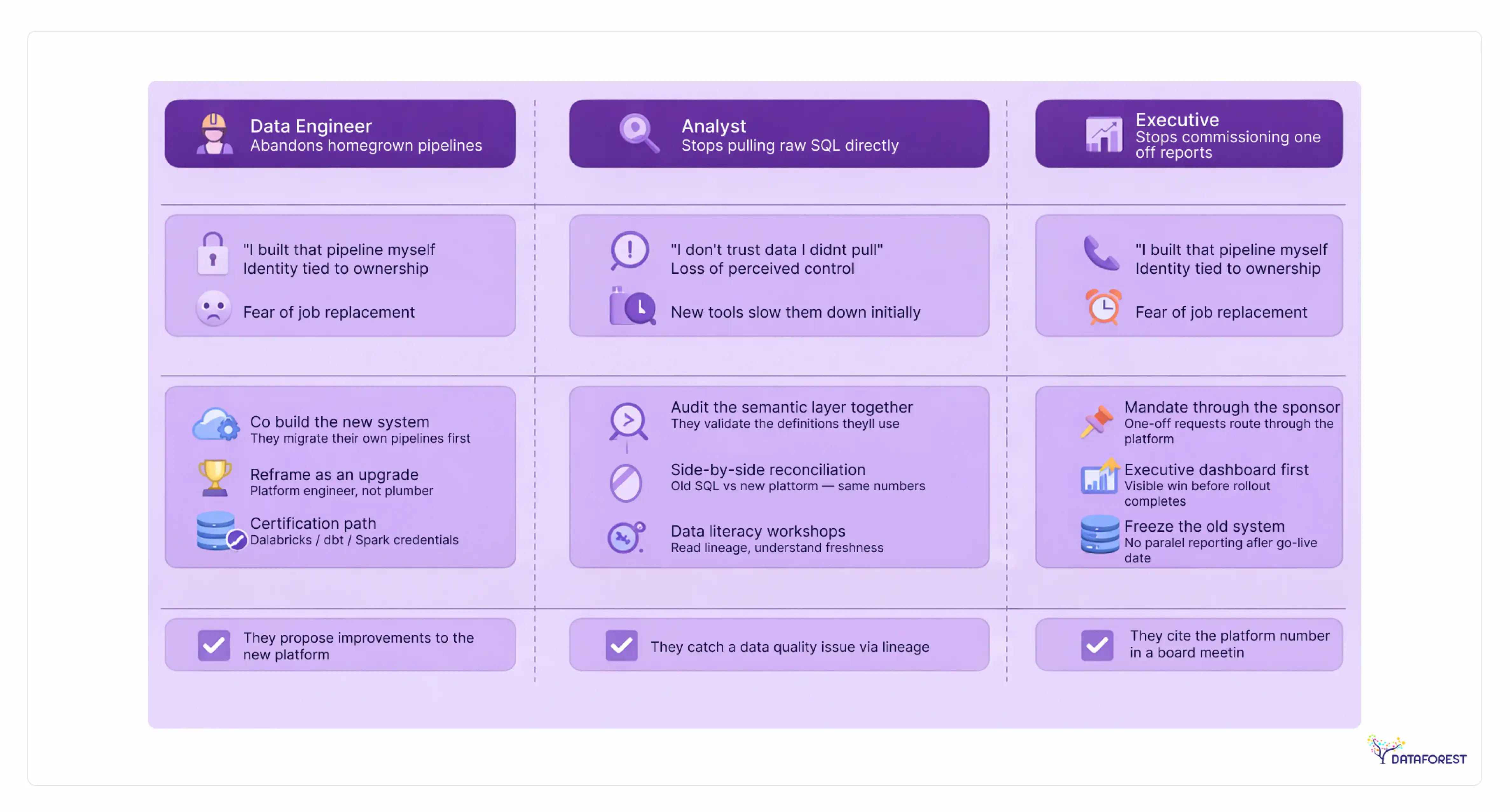

Failure #5: Insufficient change management. The platform is technically sound, but the data team wasn't trained, the analysts weren't onboarded, and the old tools are still running in parallel. Prevention: allocate 15-20% of the project budget to training, documentation, and organizational change. Technology adoption fails when the people side of adoption is skipped.

8 red flags during a consulting engagement

Watch for these during vendor selection or an active engagement:

- The consultant recommends a platform in the first meeting, before understanding your data, cost model, or compliance requirements

- No assessment phase is proposed—straight to implementation

- The proposal doesn't include data governance or quality as workstreams

- Timelines have no decision gates or checkpoints

- The team composition doesn't include a data architect (only project managers and developers)

- No mention of measuring current-state baselines before starting

- "We'll figure out governance later" appears in any conversation

- The engagement has no defined deliverables per phase—just an end date

Build vs. buy vs. partner: the data platform staffing decision

Before evaluating consulting firms, many organizations need to answer a more basic question: should we build our data platform capability in-house, buy a managed platform product, or engage a consulting partner?

Decision framework: team size, budget & complexity

According to Technavio (2025), the data engineering as a service (DaaS) market will reach $13.2 billion by 2026. That growth reflects a clear trend: organizations increasingly prefer to partner for data platform work rather than build the full capability internally, especially when the work is project-based rather than ongoing.

7 signs you need external data platform consulting

- Your data team spends more time maintaining pipelines than building new capabilities

- Different departments produce conflicting numbers from the same data sources

- Your AI/ML initiatives stalled because the training data wasn't ready

- You're planning a cloud migration, but don't know which platform to choose

- You've had a data platform project fail or significantly overrun in the last 2 years

- Your organization has grown past what 1-2 data engineers can manage, but you can't hire fast enough

- You need to meet a compliance deadline (GDPR, HIPAA, SOC2) that requires platform-level changes

How to evaluate a data platform consulting partner: 15-criteria scorecard

The vendor evaluation scorecard

Score each vendor on a 1-5 scale across these 15 criteria. Any vendor scoring below 3 on more than 3 criteria should be reconsidered.
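
The scoring rule above is simple enough to encode, which helps when several people score several vendors. The sketch below applies the stated thresholds (a score below 3 on more than 3 criteria). The criterion names are placeholders, since any 15 criteria can be plugged in.

```python
def evaluate_vendor(scores, min_score=3, max_weak_criteria=3):
    """Apply the scorecard rule to a dict of {criterion: score on a 1-5 scale}."""
    if len(scores) != 15:
        raise ValueError("expected scores for exactly 15 criteria")
    if any(not 1 <= s <= 5 for s in scores.values()):
        raise ValueError("scores must be between 1 and 5")
    weak = sorted(name for name, s in scores.items() if s < min_score)
    return {
        "average": sum(scores.values()) / len(scores),
        "weak_criteria": weak,
        "reconsider": len(weak) > max_weak_criteria,
    }


# Placeholder criterion names; substitute the real scorecard criteria.
vendor_a = {f"criterion_{i}": 4 for i in range(1, 16)}
for name in ("criterion_2", "criterion_5", "criterion_9", "criterion_12"):
    vendor_a[name] = 2

result = evaluate_vendor(vendor_a)
print(result["reconsider"], result["weak_criteria"])
```

Keeping the list of weak criteria alongside the verdict matters more than the average: a vendor can post a respectable mean while failing on the criteria that carry the most risk.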

Assessment preparation checklist: 12 items before engaging a consultant

Before your first meeting with any data platform consultant, prepare these:

- Inventory all data sources (databases, APIs, SaaS tools, files)—even the ones nobody maintains

- Document your current data architecture, even if it's a rough diagram on a whiteboard

- Set a realistic budget range and timeline expectation internally before starting external conversations

- List the top 5 data pain points, prioritized by business impact

- Identify who owns data in each department (or document that nobody does)

- Pull your current infrastructure costs (cloud bills, licensing, personnel time on data work)

- Document any compliance requirements (GDPR, HIPAA, SOC2, industry-specific)

- List all active BI tools, dashboards, and reporting systems

- Note which AI/ML initiatives are planned, in progress, or stalled

- Identify the executive sponsor for the data platform initiative

- Define what "success" looks like in measurable terms (cost reduction target, time-to-insight improvement, compliance deadline)

- Prepare access for the consulting team (read-only to production systems, sample data sets, architecture docs)

Measure Twice, Migrate Once

The data platform consulting market is expanding because the complexity of the "data problem" has finally outpaced most internal blueprints. To keep your AI ambitions from collapsing, here is the bottom line.

The state of the stack

The Complexity Gap: Organizations are drowning in data and tools, but starving for the architecture that actually connects them.

Maturity Over Hype: Diagnosing your data maturity before choosing a platform is the difference between a legacy-defining foundation and a total rebuild in 18 months.

The $13.2 Billion Shift: The Data Engineering as a Service market is projected to reach $13.2 billion by 2026, per Technavio. This signals a move toward strategic partnerships for the heavy lifting, allowing in-house teams to focus on high-value, domain-specific apps.

The "Map First" Rule: Whether you're modernizing everything or just fixing a broken dashboard, start by scoring your current maturity. Know what you have before you decide what to buy.

Why DATAFOREST?

Because we’ve seen how the "sausage is made" over 250 times, our approach is simple: we prioritize the solution over the software.

Platform Agnostic: Whether it’s Databricks, Snowflake, Fabric, or BigQuery, the recommendation follows the assessment—not a partnership quota.

Proven Results: With a 97% client return rate, we specialize in building foundations that actually stand the test of time.

Stop guessing: Request a free data platform assessment and build on a foundation that fits.

References

- Mordor Intelligence. "Big Data Engineering Services Market - Size, Share & Trends (2025-2030)." 2025. Market size: $91.54B (2025), projected $187.19B by 2030, 15.38% CAGR.

- Mordor Intelligence. "Big Data Engineering Services Market - Deployment Analysis." 2025. Cloud deployment: 65.61% of the market in 2024.

- Mordor Intelligence / McKinsey. "AI Adoption and Data Readiness." 2025. 78% of organizations use AI in at least one business function; 31% report AI-ready data.

- Technavio. "Data Engineering as a Service Market." 2025. DaaS market projected at $13.2B by 2026.

- Industry Benchmarks. "Data Pipeline Reliability Report." 2025. 30-40% of data pipelines experience failures weekly.

- Industry Research. "Real-Time Streaming Adoption." 2025. 82% of organizations use real-time streaming in pipeline architectures.

- DataForest. "Sagis Diagnostics Case Study." Internal. 21 data sources unified, 50% compute cost reduction.
