Data Platform Development Services: AI-Ready, Scalable, Built to Perform

DATAFOREST engineers have shipped data platforms across healthcare, finance, retail, and manufacturing — delivering infrastructure that's production-ready from day one.

97% of our clients come back for new projects.


Stalled AI initiatives

Data scientists spend 80% of their time preparing data because the platform wasn't built for ML workflows. Models can't reach production. The enterprise big data platform market is projected to reach $250 billion by 2033—yet organizations without AI-ready infrastructure will fall further behind every quarter.

Runaway platform costs

Legacy platforms that weren't designed for elastic cloud usage cost 3–5× more than they should. If you're still running on-premise warehouses or over-provisioned cloud instances, you're funding infrastructure debt instead of innovation.

Data platform sprawl

Without a unified data platform, every team builds its own workarounds, governance breaks down, and integration costs compound.

Data Platform Development Services That Deliver Measurable Outcomes

DATAFOREST builds enterprise data platforms across seven core capabilities—each designed to move you from fragmented, underperforming infrastructure to a unified, AI-ready data foundation.

1. Platform Strategy & Roadmap
2. Data Lakehouse & Warehouse Implementation
3. Real-Time Data Platform Engineering
4. Cloud Platform Migration & Modernization
5. AI/ML Platform Infrastructure
6. Data Governance, Security & Compliance
7. FinOps & Platform Cost Optimization

Databricks vs. Snowflake vs. BigQuery: Choosing the Right Data Platform

| Dimension | Databricks | Snowflake | Google BigQuery |
| --- | --- | --- | --- |
| Core strength | Unified lakehouse for analytics + ML | Cloud data warehouse with cross-cloud governance | Serverless warehouse with native Google AI |
| Best for | Organizations combining analytics, ML, and data engineering on one platform | Enterprises needing high-concurrency analytics and secure data sharing | Teams requiring fast SQL analytics with minimal infrastructure management |
| AI/ML readiness | Native: MLflow, Mosaic AI, feature stores built in | Growing: Cortex AI, ML functions, but requires external tooling for deep ML | Strong: BigQuery ML, Vertex AI integration, LLM functions at row level |
| Scalability model | Compute + storage separated, cluster-based | Compute + storage separated, multi-cluster warehouses (up to 300 clusters) | Fully serverless, auto-scaling |
| Governance | Unity Catalog: centralized access, lineage, quality | Horizon: cross-cloud governance, object tagging | Dataplex: metadata management, data quality |
| Cost model | DBU-based, committed, or pay-as-you-go | Credit-based, auto-suspend for idle warehouses | On-demand per TB scanned or flat-rate slots |
| Market momentum | $5B ARR, 55% YoY growth (Series L, Dec 2025) | $3.8B revenue, 27% YoY growth (Q3 FY26) | Growing within the GCP ecosystem; serverless adoption is rising |

When to combine patterns: Most enterprise data platforms use elements of multiple platforms. A Databricks lakehouse as the analytical and ML core with Snowflake for high-concurrency BI workloads and BigQuery for Google-ecosystem analytics is increasingly common. We'll help you design the right platform architecture for your data maturity and workload requirements.

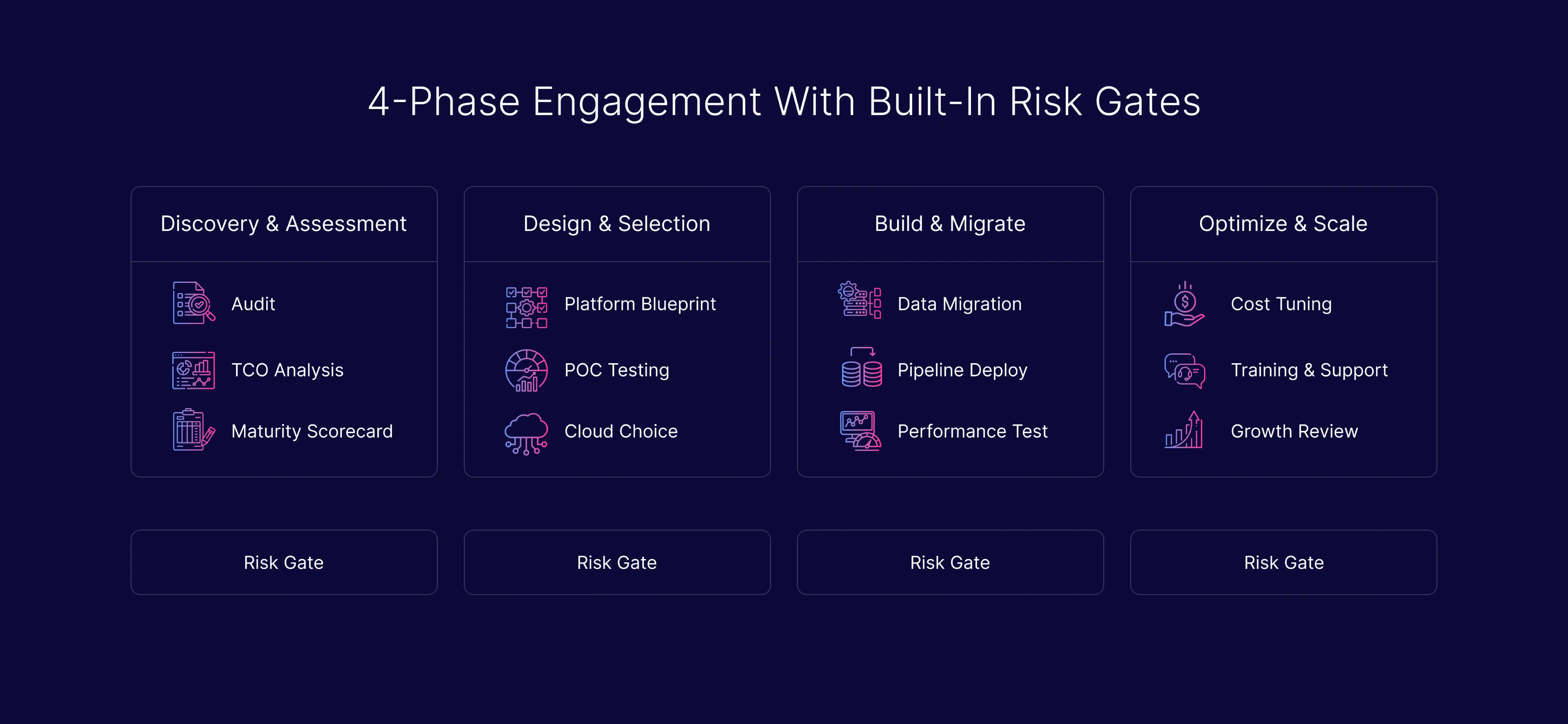

The Proof-to-Production Roadmap

Every phase has defined deliverables, validation checkpoints, and rollback protocols. You never move forward until the current phase is verified.


Phase 1: Discovery & Platform Assessment (Weeks 1–2)

Audit your current data landscape — platforms, pipelines, storage, governance gaps, and integration bottlenecks. Build a TCO model comparing your current costs to projected modern platform costs.

Deliverables: platform maturity scorecard, data source inventory, technology evaluation matrix, risk register, and a TCO comparison framework that gives your CFO the business case.

A typical Phase 1 team includes a Data Engineer and a Project Manager. Depending on the scope, we may also involve specialists such as a DevOps engineer, analytics expert, or other experts needed for the assessment.


Phase 2: Platform Design & Technology Selection (Weeks 3–5)

Select the right platform and architecture pattern (lakehouse, warehouse, hybrid), choose cloud providers, design governance, and map migration sequencing. We run a proof of concept to validate the design before committing to full rollout.

Deliverables: Target platform architecture blueprint, technology selection rationale with vendor comparison, governance framework, migration sequence with rollback checkpoints, and PoC validation results.

Before taking any action, we design rollback strategies and zero-downtime migration approaches for systems where downtime is not an option.


Phase 3: Build, Validate & Migrate (Weeks 6–14)

Phased migration with validation at each checkpoint. No big-bang cutover. Each data domain migrates independently with its own rollback gate. Pipeline engineering, schema deployment, access management, and integration testing happen in parallel streams.

Deliverables: Production-ready platform, migrated data domains, automated testing suites, performance benchmarks vs. legacy baselines.


Phase 4: Optimize, Enable & Scale (Ongoing)

FinOps tuning, performance monitoring, schema evolution, and team enablement. We don't hand you a platform and disappear—97% of our clients return because we build partnerships, not projects.

Deliverables: Cost optimization reports, performance dashboards, runbooks, team training, and quarterly platform reviews.

Timeline benchmarks: Companies typically complete an initial pilot in approximately 12 weeks. A full enterprise platform migration takes 6–12 months, depending on scope and complexity. DATAFOREST moves from validation to production 4–6 months faster than the industry average.


Data Platforms Built for Your Industry

We've delivered more than 50 industry-specific data solutions. Each vertical has distinct compliance requirements, data patterns, and performance demands.

Financial Services

GDPR, PSD2, and AML compliance frameworks with real-time transaction monitoring. Platform architecture designed for regulatory audit trails, fraud detection pipelines, and sub-second risk scoring.

Retail & E-Commerce

ML-driven recommendation systems, scalable user interaction data platforms, and real-time inventory pipelines. Platform architecture handles seasonal traffic spikes without cost overruns. The top five cloud data warehouse vendors control approximately 65% of cloud revenues—we help retailers choose the right combination.

Healthcare

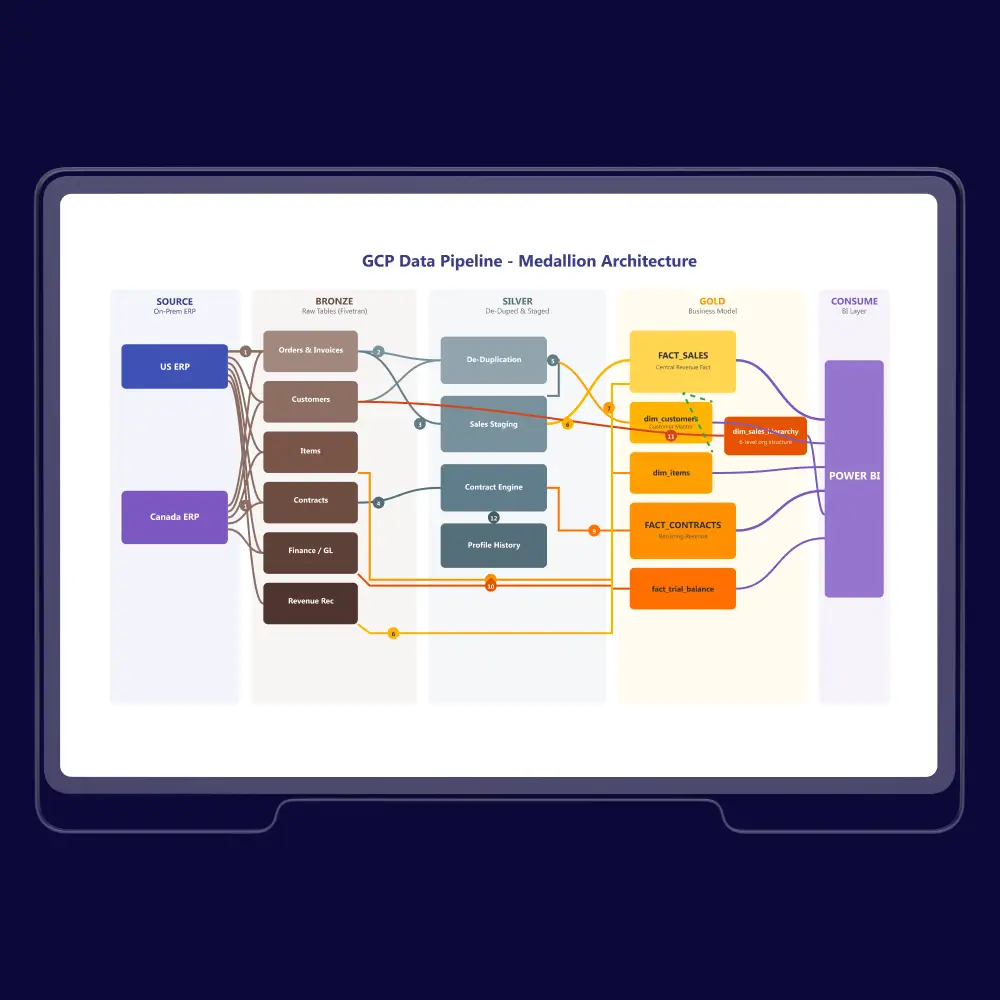

HIPAA-compliant platforms with encryption, anonymization, and secure data integration. Our Sagis Diagnostics migration proves this in practice—21 data sources unified on a HIPAA-compliant Databricks Lakehouse with Medallion Architecture.

Manufacturing & IoT

IoT sensor data collection and analysis across production lines. Predictive maintenance pipelines that process high-frequency time-series data at scale. Platform infrastructure handles edge-to-cloud data flows with latency requirements measured in milliseconds.

Turn Fragmented Data Into a Scalable Growth Asset

Siloed systems, unreliable reporting, and rising infrastructure costs slow every decision. Build a modern data platform that connects your systems, improves trust in data, and supports faster executive action.

How DATAFOREST Compares: Platform Engineers vs. Consultants

| Dimension | Traditional Consultancies | Generic Dev Shops | DATAFOREST |
| --- | --- | --- | --- |
| Experience | Varies by engagement | Limited data platform specialization | 18 years, 250+ data platform implementations |
| Platform expertise | Vendor-agnostic but shallow | Single-platform bias | Deep expertise across Databricks, Snowflake, BigQuery; Official Databricks Consulting Partner |
| Methodology | Generic frameworks | Ad hoc | Rollback strategies and zero-downtime migration approaches for systems where downtime is not an option |
| Risk mitigation | Strategy decks, not delivery | Not addressed | Phased migration with validation checkpoints at every gate |
| Cost transparency | Opaque retainers | Time & materials | TCO modeling in Phase 1; 25–35% cloud cost reduction track record |
| AI readiness | Strategy decks | Basic integration | Production ML pipelines · Databricks Consulting Partner · Feature store + model registry implementation |
| Post-launch | Handoff and exit | Break-fix support | Ongoing optimization, 97% client return rate |
| Proof | Logo walls | Generic testimonials | Named case studies with quantified before/after metrics |

Why You Can Trust Us

Technology Partnerships:

Compliance Capabilities:

Recognition:

Get Your Data Platform Assessment

Data Platforms Built by Engineers Who've Shipped 250+ Data Systems—AI-Ready, Cost-Optimized, and Scalable from Day One.

Stop paying the cost of fragmented, underperforming data infrastructure. Start with a discovery assessment that gives you a platform maturity scorecard, risk register, and TCO comparison.


97%

client return rate

250+

successful implementations

Databricks

Consulting Partner

FAQ: Data Platform Development Services

How much does data platform development cost?

Cost depends on scope, data complexity, number of source systems, and target platform architecture. DATAFOREST offers a pricing calculator for initial estimates. Typical engagements range from focused pilots starting around $50K–$100K to enterprise-wide platform implementations from $250K–$1M+. We build a TCO model in Phase 1 so you can see projected costs versus what your current infrastructure costs annually—giving your CFO a clear business case with 12-month and 36-month projections.

Key cost factors include: number of data sources to integrate, real-time versus batch processing requirements, compliance needs (HIPAA and SOC 2 add governance layers), team augmentation versus full project delivery, and ongoing managed services. Our Sagis Diagnostics engagement, for example, achieved approximately 50% compute cost reduction — meaning the platform investment paid for itself within the first year of operation.


How long does a typical data platform implementation take?

Initial pilots reach production in approximately 12 weeks. Full enterprise platform implementations take 6–12 months on average, depending on the number of data sources, compliance requirements, and migration complexity. DATAFOREST moves from validation to production 4–6 months faster than the industry average through our phased approach with parallel workstreams.

What if we don't need a full platform rebuild?

Sometimes the right answer is not to rebuild everything. We assess your platform maturity first and recommend the minimum intervention that achieves your outcomes. That might be optimizing existing pipelines, adding a streaming layer, modernizing one data domain at a time, or implementing better governance on your current platform. We call this "progressive modernization"—you get immediate value without the risk of a full platform replacement.

Which data platform should we use—Databricks, Snowflake, or BigQuery?

We're platform-experienced but outcome-driven. As an Official Databricks Consulting Partner, we have deep expertise in the lakehouse architecture—and we also implement Snowflake and BigQuery based on workload requirements. Platform selection happens in Phase 2 based on your existing infrastructure, team skills, workload types, and cost profile—not vendor preference. See our comparison table above for a detailed breakdown of when each platform fits best.

How do you handle zero-downtime migration to a new data platform?

Phased migration with parallel running. Each data domain migrates independently with its own rollback gate. We validate data integrity at every checkpoint before cutting over. Legacy systems stay live until the modern platform proves stable under production load. This approach eliminates the "big bang" risk that causes the majority of data migration failures.
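To make the per-domain rollback gate concrete, here is a minimal Python sketch using in-memory data. It is an illustration under stated assumptions, not our production tooling: `fingerprint`, `migrate_domain`, the domain names, and the row-count-plus-checksum check are all hypothetical stand-ins for whatever validation a real migration would run against the legacy and target systems.

```python
import hashlib
from typing import Callable, Iterable


def fingerprint(rows: Iterable[tuple]) -> tuple[int, int]:
    """Return an order-independent (row_count, checksum) pair for a set of rows."""
    count, checksum = 0, 0
    for row in rows:
        digest = hashlib.sha256(repr(row).encode("utf-8")).digest()
        # Summing per-row hashes makes the result independent of row order.
        checksum = (checksum + int.from_bytes(digest[:8], "big")) % 2**64
        count += 1
    return count, checksum


def migrate_domain(domain: str, legacy: dict, target: dict,
                   copy_rows: Callable = list) -> bool:
    """Migrate one data domain and keep the copy only if validation passes."""
    target[domain] = copy_rows(legacy[domain])
    if fingerprint(legacy[domain]) == fingerprint(target[domain]):
        return True        # gate passed: this domain is ready for cutover
    del target[domain]     # rollback: legacy remains the system of record
    return False


# Hypothetical domains; the second copy step deliberately drops a row.
legacy = {"orders": [(1, "a"), (2, "b")], "claims": [(7, "x"), (8, "y")]}
target = {}

orders_ok = migrate_domain("orders", legacy, target)
claims_ok = migrate_domain("claims", legacy, target,
                           copy_rows=lambda rows: list(rows)[:-1])
print(orders_ok, claims_ok, sorted(target))  # True False ['orders']
```

Because each domain is validated and rolled back on its own, a failed copy of one domain leaves the others, and the legacy system, untouched.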

What's the difference between a data platform and a data warehouse?

A data warehouse is one component of a data platform. It stores structured data optimized for analytical queries and is a single technology layer. A data platform is the complete ecosystem: ingestion pipelines, storage (lakehouse, warehouse, or both), processing engines, governance, ML infrastructure, and delivery. Think of it this way: Snowflake is a data warehouse. A stack that combines Snowflake with Kafka, dbt, Airflow, and a feature store is a data platform. We build the full platform, not just the warehouse.

How do you handle governance and compliance on the platform?

Governance is built into the platform architecture from day one — not bolted on after launch. We implement PII handling, data lineage tracking (who accessed what data, when, and how it was transformed), access controls, and compliance frameworks for GDPR, HIPAA, SOC 2, and PCI-DSS. With 140+ countries now enforcing data privacy laws, retroactive compliance costs 3–5× more than building it in. Our platforms deliver 95%+ data quality SLAs and 80% reduction in data-related incidents versus ungoverned environments.

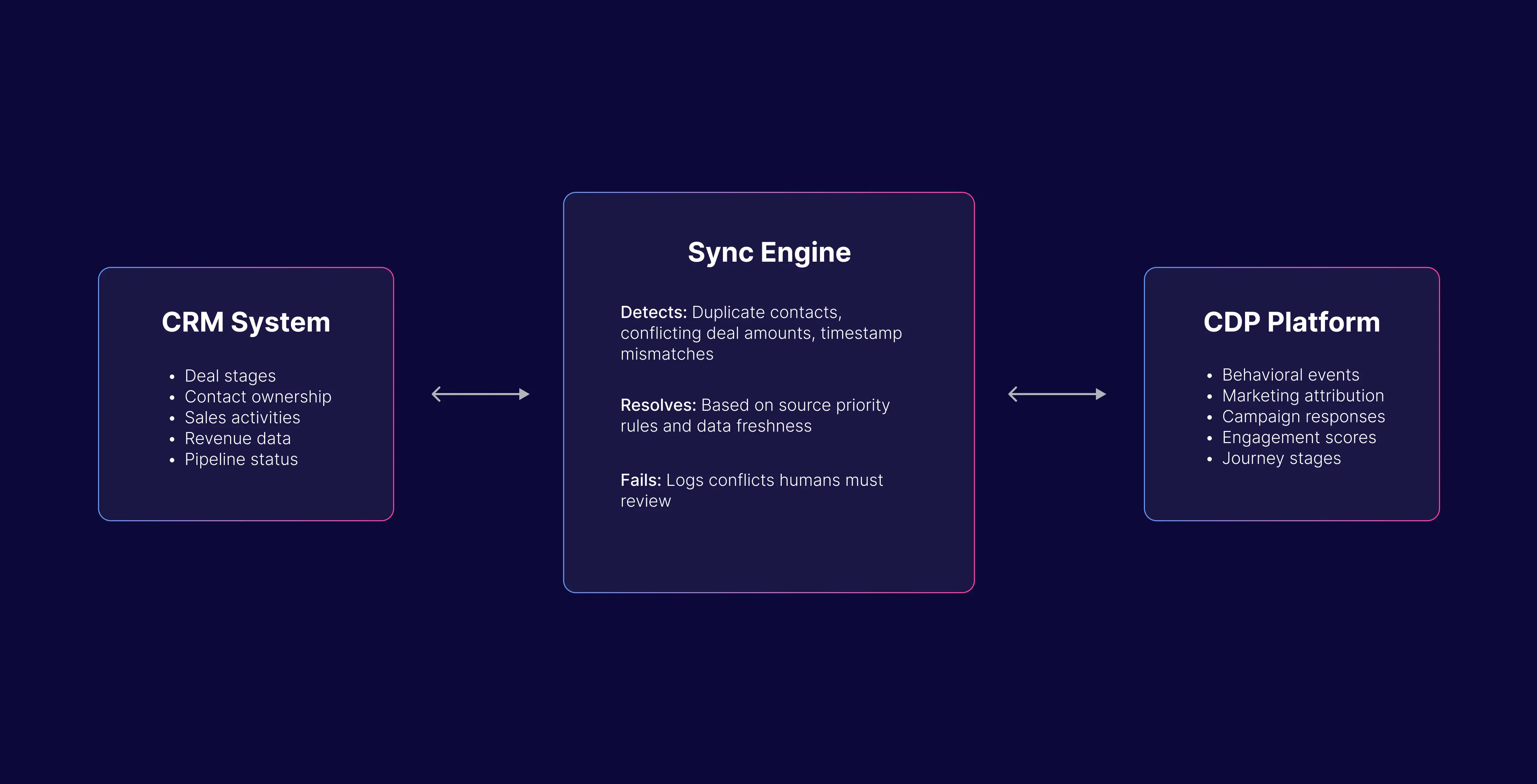

Can you integrate our existing tools and data sources?

Yes. Most enterprise platform builds involve integrating dozens of existing systems — CRMs, ERPs, SaaS applications, legacy databases, IoT streams, and third-party APIs. We support integration through Apache Kafka for streaming, dbt for transformation, Airflow/Dagster for orchestration, and native connectors for platforms like Databricks, Snowflake, and BigQuery. Our Sagis Diagnostics project unified 21 separate data sources into a single governed platform — integration complexity is what we specialize in.

What team will we work with?

You’ll work with an experienced delivery team aligned with your project scope and complexity. Every engagement includes an experienced data engineer and a dedicated Project Manager to ensure smooth execution, clear communication, and steady progress. Depending on your needs, we can also bring in additional specialists such as a DevOps engineer, analytics expert, data scientist, or other experts required for the project.

How do you measure platform success?

KPIs are defined up-front and measured against your legacy baselines established in Phase 1. Typical metrics include: query performance improvement (targeting 60–80% faster), cloud cost reduction (25–35% average), time-to-insight acceleration (2–3× faster), pipeline reliability (99.9% SLA), and data quality scores. We report monthly against these KPIs and run quarterly platform reviews to identify optimization opportunities. No vanity metrics—only business outcomes.
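As a small illustration of measuring KPIs against a Phase 1 baseline, here is a Python sketch. The metric names, target thresholds, and sample values are hypothetical, and it assumes that "faster" and "cost reduction" are both expressed as a percentage reduction relative to the baseline.

```python
def reduction_pct(baseline: float, current: float) -> float:
    """Percent reduction relative to the baseline; negative means a regression."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (baseline - current) * 100.0 / baseline


def kpi_report(baseline: dict, current: dict, targets: dict) -> dict:
    """Return {metric: (achieved_reduction_pct, target_met)} for each target."""
    report = {}
    for metric, target_pct in targets.items():
        achieved = reduction_pct(baseline[metric], current[metric])
        report[metric] = (achieved, achieved >= target_pct)
    return report


# Illustrative values only: legacy baseline versus the new platform.
baseline = {"p95_query_seconds": 10.0, "monthly_cloud_cost": 100_000.0}
current = {"p95_query_seconds": 3.0, "monthly_cloud_cost": 80_000.0}
targets = {"p95_query_seconds": 60.0, "monthly_cloud_cost": 25.0}

for metric, (achieved, met) in kpi_report(baseline, current, targets).items():
    print(f"{metric}: {achieved:.1f}% reduction, target met: {met}")
```

In this made-up example the query-latency target is met (70% against a 60% target) while the cost target is not (20% against 25%), which is the kind of gap a monthly report would flag.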

Let’s discuss your project

Share project details, like scope or challenges. We'll review and follow up with next steps.
