80%+ Reduction in Manual Job Data Handling Using an AI Platform

The client productized its healthcare recruitment services by replacing manual job data processing with an AI-powered platform. We built an LLM-driven microservice architecture that automates the ingestion, extraction, validation, and deduplication of thousands of unstructured job postings every day. The solution powers both web and mobile applications, significantly improving processing speed and data accuracy. As a result, the platform reduced operational costs by 20–40% while enabling scalable growth.

- 0.9 s job posting processing time

- 80–95% reduction in manual job data handling

- 20–40% operational cost reduction

The client is a leading healthcare recruitment company in Australia, working with hospitals, government bodies, and large medical networks nationwide. Its platform connects healthcare professionals seeking jobs with recruitment agencies and providers looking to fill vacancies. As the business evolved from a service-led model into a digital platform, it launched a web service and mobile application supported by a scalable microservices architecture designed for reliability, growth, and future productization.

Tech stack: Llama, GPT, Postgres, Qdrant, Azure

THE CHALLENGE

Productizing a Service-Driven Recruitment Model

As the client built a national web platform to productize its recruitment services, it needed a robust backend capable of automating large-scale job data extraction from unstructured inputs. Thousands of job postings arrived daily via emails and documents in inconsistent formats, overwhelming manual workflows and limiting speed, accuracy, and scalability. The client required a modern, microservice-based backend to support reliable ingestion, processing, and transformation of job data at product scale.


Automating Manual Job Data Processing

The client needed to replace time-intensive manual work with automated processing as part of productizing its recruitment service. Up to 5,000 emails arrived each day, with job postings embedded in message bodies, PDF and Word attachments, and forwarded messages. Many contained multiple roles, inconsistent formats, or incomplete data.


Accuracy, Consistency, and Duplication

Job data needed to meet strict accuracy thresholds (85–95%) while avoiding duplicate or conflicting listings across sources.

Errors or duplicated roles reduced trust in the platform and created downstream issues for agencies and healthcare providers.


Performance and Reliability Constraints

Each job posting had to be processed in under 4 seconds with an error rate below 5%, even under peak daily load.

Latency or failures would directly impact time-sensitive healthcare staffing needs.
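The two service-level targets above can be expressed as a simple batch check. This is a minimal sketch: the `ProcessingRecord` shape and the `meets_slo` function are hypothetical, and the check treats 4 seconds as a hard ceiling for every posting, whereas a production monitor might track a latency percentile instead.

```python
from dataclasses import dataclass

# Targets stated in the case study: under 4 seconds per posting, error rate below 5%.
MAX_LATENCY_S = 4.0
MAX_ERROR_RATE = 0.05


@dataclass
class ProcessingRecord:
    """Outcome of processing one job posting (hypothetical record shape)."""
    latency_s: float
    failed: bool


def meets_slo(records: list[ProcessingRecord]) -> bool:
    """True if every posting finished under the latency ceiling and the error rate is below 5%."""
    if not records:
        return True
    error_rate = sum(r.failed for r in records) / len(records)
    slowest = max(r.latency_s for r in records)
    return slowest < MAX_LATENCY_S and error_rate < MAX_ERROR_RATE
```

With 100 records of which one failed, the error rate is 1% and `meets_slo` returns `True`; with one failure in 20 records the rate is exactly 5%, which does not satisfy the strict "below 5%" target.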


AI Model Selection and Compliance

The system had to balance model accuracy, cost, and latency—while ensuring sensitive personal data was never exposed to LLMs.

Operating in a regulated healthcare environment added strict compliance requirements.

THE SOLUTION

LLM-powered microservice designed to ingest, extract, validate, and standardize job data

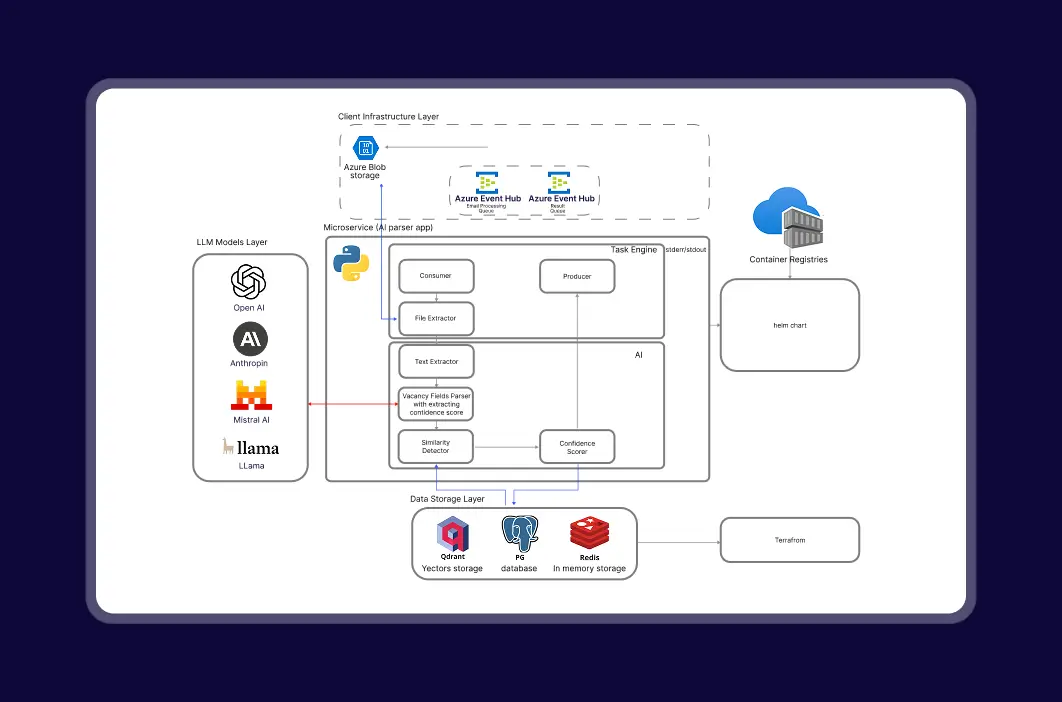

We built a dedicated, LLM-powered microservice designed to ingest, extract, validate, and standardize job data at national scale—ready for productization.

The solution automated the full ingestion pipeline, replacing manual workflows with a fast, compliant, and extensible AI-driven service that integrates directly into the client’s platform.


Automated, LLM-Powered Backend for Job Data Processing

We built a dedicated backend microservice that uses schema-guided LLM extraction to process unstructured job inputs at scale. The system semantically parses emails, PDFs, and Word documents and extracts 12–15 standardized fields per role, even when a single message contains multiple job listings. Fully automated ingestion and structuring replaced manual workflows, enabling product-grade data consistency.
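A minimal sketch of what schema-guided extraction can look like, assuming the model is asked to return a JSON array with one object per role. The `JobPosting` fields, the `REQUIRED` set, `build_prompt`, and `parse_roles` are hypothetical illustrations; the actual 12–15 field schema and prompt are not published. The LLM call itself is left out, so `parse_roles` takes the model's raw text output.

```python
import json
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class JobPosting:
    # Hypothetical subset of the standardized fields; the real schema is not published.
    title: str
    employer: str
    location: str
    employment_type: str
    specialty: Optional[str] = None
    salary: Optional[str] = None
    start_date: Optional[str] = None


# Fields this sketch treats as mandatory (the non-defaulted dataclass fields).
REQUIRED = {"title", "employer", "location", "employment_type"}


def build_prompt(message_text: str) -> str:
    """Embed the target schema in the prompt so the model returns a JSON array of roles."""
    schema = {f.name: "string or null" for f in fields(JobPosting)}
    return (
        "Extract every job role in the message below. "
        "Return only a JSON array; each element must follow this schema:\n"
        f"{json.dumps(schema)}\n\nMessage:\n{message_text}"
    )


def parse_roles(llm_output: str) -> list[JobPosting]:
    """Validate the model's JSON output; one message may yield several roles."""
    data = json.loads(llm_output)
    if not isinstance(data, list):
        raise ValueError("expected a JSON array of roles")
    allowed = {f.name for f in fields(JobPosting)}
    roles = []
    for item in data:
        if not isinstance(item, dict):
            raise ValueError("each role must be a JSON object")
        # A required field counts as missing if absent, null, or empty.
        missing = REQUIRED - {k for k, v in item.items() if v}
        if missing:
            raise ValueError(f"missing required fields: {sorted(missing)}")
        # Ignore keys outside the schema rather than failing on them.
        roles.append(JobPosting(**{k: v for k, v in item.items() if k in allowed}))
    return roles
```

Rejecting a role with missing required fields, rather than silently accepting it, gives a natural hook for the confidence scoring and human review described later in this section.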


High-Performance Processing Architecture

The system was optimized to process each job posting in under 4 seconds, even at peak daily volumes. Asynchronous processing and lightweight validation pipelines ensured reliability under load.
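One way to enforce a per-posting latency budget with asynchronous processing is `asyncio.wait_for` combined with a semaphore that caps concurrency. The sketch below is illustrative only: `process_posting` is a hypothetical stand-in that sleeps instead of calling a model, and the concurrency limit of 8 is an arbitrary assumption.

```python
import asyncio

LATENCY_BUDGET_S = 4.0  # per-posting target from the case study


async def process_posting(posting_id: str, delay: float) -> str:
    """Hypothetical stand-in for extraction and validation; `delay` simulates model latency."""
    await asyncio.sleep(delay)
    return f"{posting_id}:ok"


async def process_batch(items: list[tuple[str, float]], concurrency: int = 8,
                        budget: float = LATENCY_BUDGET_S) -> dict[str, str]:
    """Process postings concurrently, capping in-flight work and each posting's runtime."""
    sem = asyncio.Semaphore(concurrency)

    async def run_one(pid: str, delay: float) -> tuple[str, str]:
        async with sem:
            try:
                return pid, await asyncio.wait_for(process_posting(pid, delay), timeout=budget)
            except asyncio.TimeoutError:
                # Over budget: flag for retry instead of blocking the rest of the batch.
                return pid, "timeout"

    results = await asyncio.gather(*(run_one(pid, d) for pid, d in items))
    return dict(results)
```

For example, `asyncio.run(process_batch([("a", 0.01), ("b", 0.2)], budget=0.1))` returns `{"a": "a:ok", "b": "timeout"}`. With the default budget, any posting exceeding 4 seconds would be flagged rather than holding up the batch.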


Semantic Deduplication & Data Quality Control

We introduced semantic deduplication logic to detect and eliminate duplicate or overlapping job postings across channels. A confidence-scoring mechanism evaluates extraction quality and consistency before data enters the platform.
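Semantic deduplication is commonly implemented by comparing embedding vectors. The sketch below assumes each posting has already been embedded by some embedding model and uses a plain cosine-similarity scan with a hypothetical threshold of 0.92; at this volume, the nearest-neighbour lookup would more plausibly go to a vector database such as Qdrant (listed in the stack) than to a linear scan. The `confidence` function is likewise a toy proxy (the share of required fields filled in), not the case study's actual scoring mechanism.

```python
import math

DUPLICATE_THRESHOLD = 0.92  # hypothetical; would be tuned on labeled duplicate pairs


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity of two vectors; 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    na = math.sqrt(sum(x * x for x in a))
    nb = math.sqrt(sum(y * y for y in b))
    return dot / (na * nb) if na and nb else 0.0


def deduplicate(postings: list[tuple[str, list[float]]],
                threshold: float = DUPLICATE_THRESHOLD) -> list[str]:
    """Keep the first posting of each near-duplicate group; return kept IDs in input order."""
    kept: list[tuple[str, list[float]]] = []
    for pid, vec in postings:
        if all(cosine(vec, kvec) < threshold for _, kvec in kept):
            kept.append((pid, vec))
    return [pid for pid, _ in kept]


def confidence(extracted: dict[str, object], required: set[str]) -> float:
    """Toy confidence score: share of required fields the extraction filled in."""
    if not required:
        return 1.0
    return sum(1 for f in required if extracted.get(f)) / len(required)
```

Keeping the first posting of each group is the simplest policy; a real system might instead merge fields from the duplicates or prefer the most complete version.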


Model-Swapping, Compliance & Human Review

We implemented dynamic model-swapping logic that escalates through a sequence of models (Llama → GPT → Mistral → Claude) whenever a confidence score falls below its threshold.

- PII is stripped before LLM invocation

- Low-confidence cases are routed to a human-in-the-loop review module

- Feedback loops continuously improve extraction quality over time

This ensured compliance while maintaining high accuracy and trust.
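The escalation and routing logic can be sketched as follows. The model order (Llama → GPT → Mistral → Claude) comes from the description above; everything else is an assumption: model calls are hypothetical callables returning `(fields, confidence)`, the 0.85 confidence threshold is illustrative, and the regex-based redaction only masks email addresses and Australian-style phone numbers, whereas real PII stripping would also need to cover names, addresses, and other identifiers.

```python
import re
from typing import Callable

# Hypothetical model interface: takes redacted text, returns (extracted fields, confidence in [0, 1]).
ModelFn = Callable[[str], tuple[dict, float]]

# Illustrative patterns only: email addresses and Australian-style phone numbers.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_RE = re.compile(r"(?:\+61 ?|0)[2-478](?:[ -]?\d){8}")


def strip_pii(text: str) -> str:
    """Mask emails and phone numbers before any text is sent to a model."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def extract_with_fallback(text: str, models: list[tuple[str, ModelFn]],
                          threshold: float = 0.85) -> dict:
    """Try models in order; stop at the first whose confidence clears the threshold."""
    if not models:
        raise ValueError("at least one model is required")
    clean = strip_pii(text)  # PII is removed once, before the first model call
    best = None
    for name, call in models:
        extracted, conf = call(clean)
        if best is None or conf > best["confidence"]:
            best = {"model": name, "fields": extracted, "confidence": conf}
        if conf >= threshold:
            best["route"] = "auto"
            return best
    # No model cleared the threshold: queue the best attempt for human review.
    best["route"] = "human_review"
    return best
```

In practice `models` would list the four models in the order given above, with `call_llama`, `call_gpt`, `call_mistral`, and `call_claude` as hypothetical wrappers around each provider's API. Reviewer corrections from the `human_review` route are what a feedback loop could use to improve extraction over time.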

THE RESULT

From Manual Data Processing to a Product-Ready AI Platform

The client transformed a manual, service-led recruitment workflow into a product-grade backend powering its national web platform and mobile app. Job data ingestion, extraction, validation, and standardization are now fully automated, delivering consistent, high-quality listings across agencies and healthcare providers.

The microservice architecture improved speed, accuracy, and platform trust while significantly reducing operational effort and cost—creating a scalable foundation for continued product growth across Australia.

